OpenAI just released GPT-oss, its first open-source model since GPT-2. Is it as good as they say?

OpenAI did something that even Raven Baxter couldn’t predict…

They returned to their open-source roots.

Today, OpenAI announced gpt-oss. Unlike any OpenAI model we’ve seen since GPT-2, this one is completely open-source under the Apache 2.0 license. Because the models are open-source, very inexpensive, and in direct competition with OpenAI’s own paid models, you’d think that these were some cookie-cutter models they stole from GitHub and released to maintain a moral high ground over DeepSeek and Meta.

You’d be wrong. These models are actually pretty fucking good.

What is GPT-oss?

GPT-oss is OpenAI’s newest large language model. It stands for Open-Source Systems, and unlike any of OpenAI’s other models (except GPT-2, released in 2019), it’s completely open-weights. That means you can download it, run it, and fine-tune it for nearly any (non-nefarious) use-case you can imagine.

Yes, even THAT use-case 😉

It consists of two models: gpt‑oss‑20B, a smaller 20-billion-parameter model that you can run on a decent MacBook, and gpt‑oss‑120B, a larger, more powerful model that you can run with an overpriced (but consumer-grade) GPU.

These models support a 128K-token context window (roughly 131,000 tokens), can do chain-of-thought reasoning, and have agentic capabilities.

However, unlike many other open-source models, the source code (and training data) have not been released. Their excuse for this is to prevent misuse, but in reality, it’s likely to keep their infrastructure proprietary.

Better than nothing, I suppose.

How good are these models really?

The last major open-source model that was released was Llama 4, and the reaction to it wasn’t even mixed… it was piss poor.

Because Llama sucked.

Even though Meta figured out how to game the benchmarks artificially and place Llama at the top of the list, nobody was fooled. On private benchmarks and real-world tasks, Llama flopped like a level 1 Magikarp using Splash against the literal god of time.

Because of this, the AI community has learned not to trust public benchmarks. We collectively ask ourselves, “what can this model really do in the real world?”

So when OpenAI released GPT-oss, I decided to test it myself on two different real-world tasks.

Emphasis on real-world.

These tasks include:

- Generating complex SQL queries

- Generating a complex JSON object

Let’s start with the former.

Using GPT-oss to generate highly complex SQL Queries

In a previous article, I determined that the Gemini family is the best at generating SQL queries for complex financial analysis questions.

I did this by creating a custom benchmark called EvaluateGPT. EvaluateGPT is an open-source benchmark used to test language models. It focuses on how well these models can generate BigQuery queries for complex financial questions.

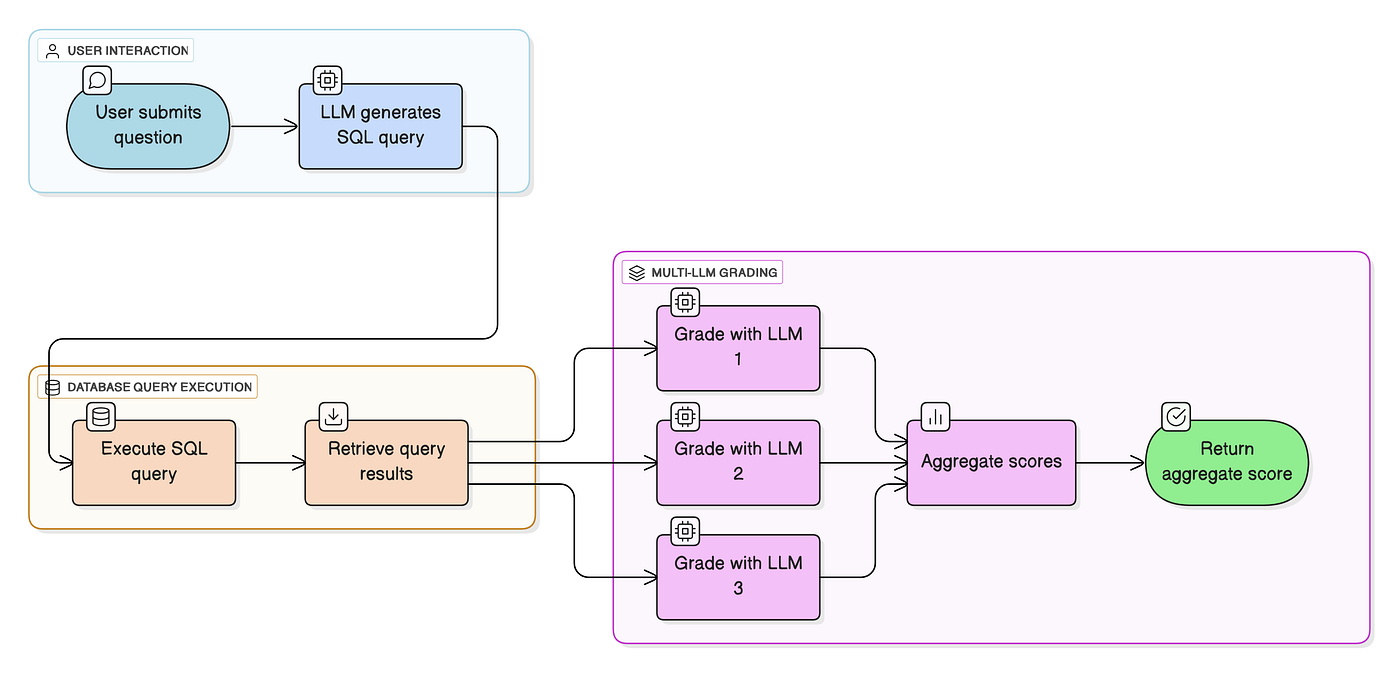

The process works as follows.

- A user submits a question to the model that we’re testing

- The LLM generates a SQL Query that answers this question

- The SQL Query is executed against the database

- The query and the results are fed into 3 powerful LLMs (Claude Sonnet 4, Gemini 2.5 Pro, and GPT-4.1) and they generate a score from 0 to 1.

- The scores are averaged and we sort all of the models by their accuracy score
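To make the steps above concrete, here’s a minimal sketch of scoring a single question. None of these names come from EvaluateGPT itself: `generate_sql`, `run_query`, and the judge callables are hypothetical stand-ins for the model under test, the BigQuery call, and the three judge LLMs. I’m also assuming that a query which fails to execute scores zero, and that the judges see the question alongside the query and its results.

```python
from statistics import mean
from typing import Callable, Sequence

# Hypothetical signatures, for illustration only.
GenerateSql = Callable[[str], str]          # question -> SQL text
RunQuery = Callable[[str], list]            # SQL text -> result rows
Judge = Callable[[str, str, list], float]   # (question, sql, rows) -> score in [0, 1]


def score_question(question: str, generate_sql: GenerateSql,
                   run_query: RunQuery, judges: Sequence[Judge]) -> float:
    """Run one question through the pipeline and average the judges' scores."""
    sql = generate_sql(question)
    try:
        rows = run_query(sql)
    except Exception:
        # Assumption: a query that fails to execute gets a score of zero.
        return 0.0
    # Clamp each judge's score to [0, 1] before averaging.
    scores = [min(1.0, max(0.0, judge(question, sql, rows))) for judge in judges]
    return mean(scores)


# Stand-ins so the sketch runs without API keys or a database.
def fake_model(question: str) -> str:
    return "SELECT ticker FROM prices LIMIT 1"


def fake_db(sql: str) -> list:
    return [("AAPL",)]


def broken_db(sql: str) -> list:
    raise RuntimeError("syntax error")


fake_judges = [
    lambda q, s, r: 1.0,
    lambda q, s, r: 0.5,
    lambda q, s, r: 0.75,
]

example_score = score_question("placeholder question", fake_model, fake_db, fake_judges)
failed_score = score_question("placeholder question", fake_model, broken_db, fake_judges)
```

Passing the model, database, and judges in as plain callables keeps the scoring logic testable without touching a real API.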

We then take the mean scores, median scores, and score distribution, and we create a table to compare each LLM to one another.
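Here’s a rough sketch of that aggregation step. The bucket boundaries (below 0.5, 0.5 to 0.8, and 0.8 and up) and the model names are my own placeholders, and the scores are made up purely to show why the mean and the median can tell different stories.

```python
from statistics import mean, median


def summarize(scores: list[float]) -> dict:
    """Mean, median, and a coarse score distribution for one model."""
    low = sum(1 for s in scores if s < 0.5)
    high = sum(1 for s in scores if s >= 0.8)
    return {
        "mean": mean(scores),
        "median": median(scores),
        "low": low,                        # scores below 0.5
        "mid": len(scores) - low - high,   # scores from 0.5 up to 0.8
        "high": high,                      # scores of 0.8 and above
    }


def leaderboard(results: dict[str, list[float]]) -> list[tuple[str, dict]]:
    """Summarize every model and sort by mean score, best first."""
    rows = [(name, summarize(scores)) for name, scores in results.items()]
    return sorted(rows, key=lambda row: row[1]["mean"], reverse=True)


# Made-up scores for two placeholder models, not real benchmark output.
demo = {
    "model_a": [1.0, 0.9, 0.2, 0.8],
    "model_b": [0.6, 0.7, 0.7, 0.6],
}
table = leaderboard(demo)
```

In this toy data, one bad answer drags `model_a`’s mean well below its median, which is why it helps to look at both numbers plus the distribution.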

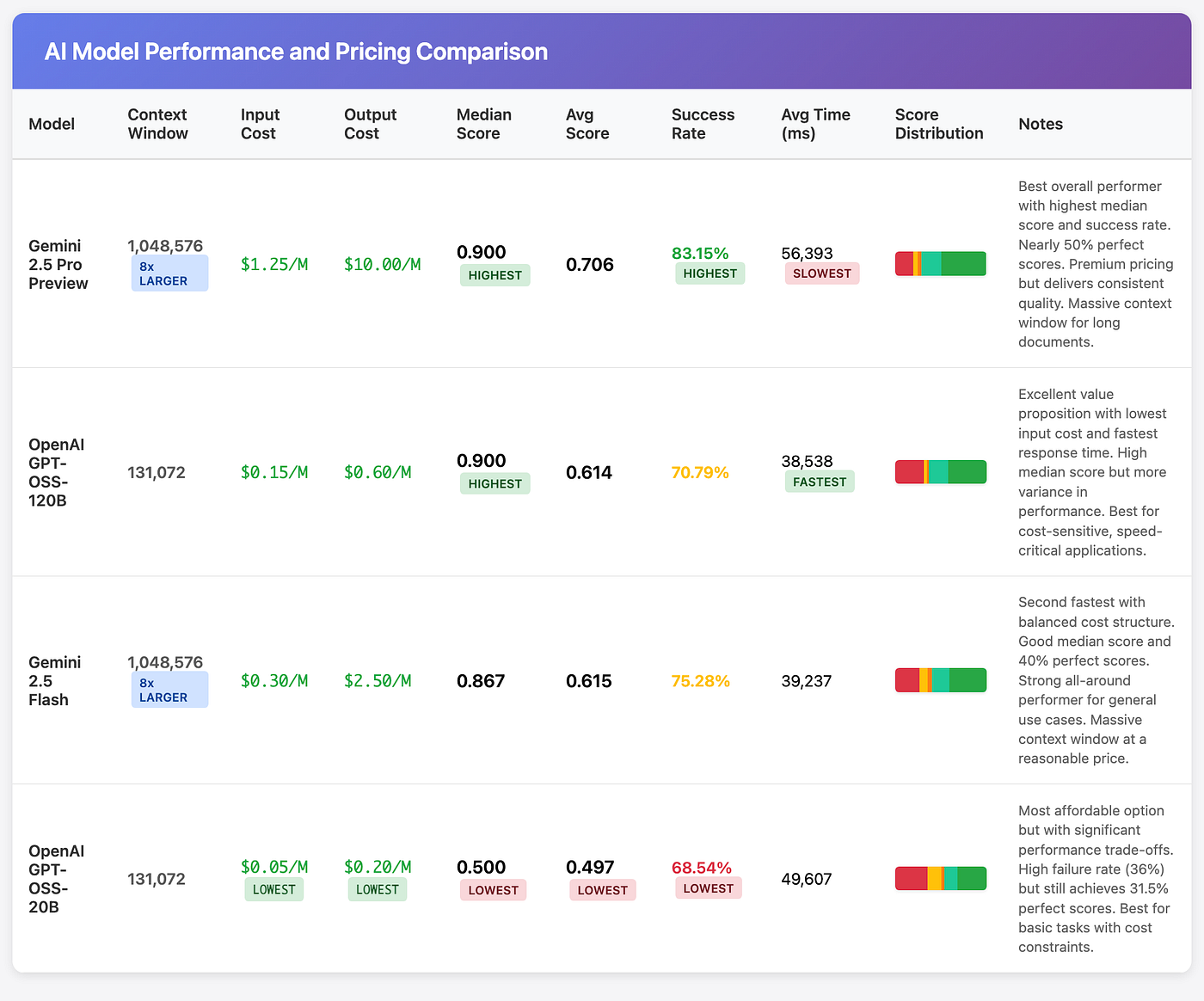

Because we’ve determined in previous articles that Gemini 2.5 Flash is better than Grok 4, Claude 4 Opus, and OpenAI o3, I’ve decided to test just the very best models. They are:

- Gemini 2.5 Pro

- Gemini 2.5 Flash

- OpenAI GPT-oss 120B

- OpenAI GPT-oss 20B

Fully expecting Gemini Flash to dominate like it almost always does, I ran the benchmark on the dataset of 90 questions.

The final scores shocked me.

Comparing GPT-oss to Gemini in SQL Query Generation

Shockingly, Gemini 2.5 Flash was overthrown by OpenAI! GPT-oss delivered faster speeds and higher median scores at half the input cost and a quarter of the output cost. This makes it the best model in its price range for SQL Query generation.
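How much cheaper a whole query ends up being depends on the mix of input and output tokens. Here’s a small sketch of that arithmetic. The token counts and baseline prices below are arbitrary units that I made up for illustration, not real pricing; only the 0.5 and 0.25 ratios come from the comparison above.

```python
def relative_cost(input_tokens: int, output_tokens: int,
                  base_in_price: float, base_out_price: float,
                  in_ratio: float = 0.5, out_ratio: float = 0.25) -> float:
    """Cost of a query on the cheaper model as a fraction of the baseline cost."""
    baseline = input_tokens * base_in_price + output_tokens * base_out_price
    cheaper = (input_tokens * base_in_price * in_ratio
               + output_tokens * base_out_price * out_ratio)
    return cheaper / baseline


# Hypothetical query: a long prompt (schema plus question) and a short SQL answer.
# Prices are in arbitrary units per token, chosen only to make the math easy.
ratio = relative_cost(input_tokens=10_000, output_tokens=500,
                      base_in_price=1.0, base_out_price=4.0)
```

With these made-up numbers the query costs about 46% of the baseline. Whatever the mix, the result always lands somewhere between a quarter and a half.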

Gemini 2.5 Pro still dominates at the top, but at more than 10x the cost per query. This is untenable for data-intensive tasks. More than that, OpenAI’s models are open-source, making it possible to fine-tune them for domain-specific use-cases such as this. For an open-source model, these results are incredible!

Now, Google still wins on the context window front, offering an unbeatable 1 million token window. However, 131,000 tokens is nothing to scoff at. If your goal isn’t to ingest 3 Harry Potter books in the context window, OpenAI’s oss model is more than capable.

Since GPT-oss is a reasoning champion on one complex task, you might think that translates to every single use-case, right?

Well… you’d unfortunately be wrong.

Comparing GPT-oss to Gemini in JSON Generation

Wanting to try a wide range of tasks, I also tested GPT-oss in a less-formal but equally complex JSON generation task.

I don’t mean “generate a JSON of a dog object!” I mean something truly complex and applicable for real-world tasks.

Specifically, we’ll use it to create a deeply-nested portfolio of trading strategies. A trading strategy is simply a set of rules for when to buy or sell stocks. The schema for creating a trading strategy is absolutely massive, and it includes steps for creating conditions, indicators, and more. It’s depicted simply by the following diagram.

Unfortunately, unlike with EvaluateGPT, I don’t (yet) have a simple script for testing the model on a dataset of questions. We’ll just have to do a one-shot generation, and compare and contrast our results.

To perform this test:

- I’ll go to NexusTrade.io and create a free account

- I’ll ask the AI chat to create a trading strategy

- I’ll manually inspect the strategy that was created

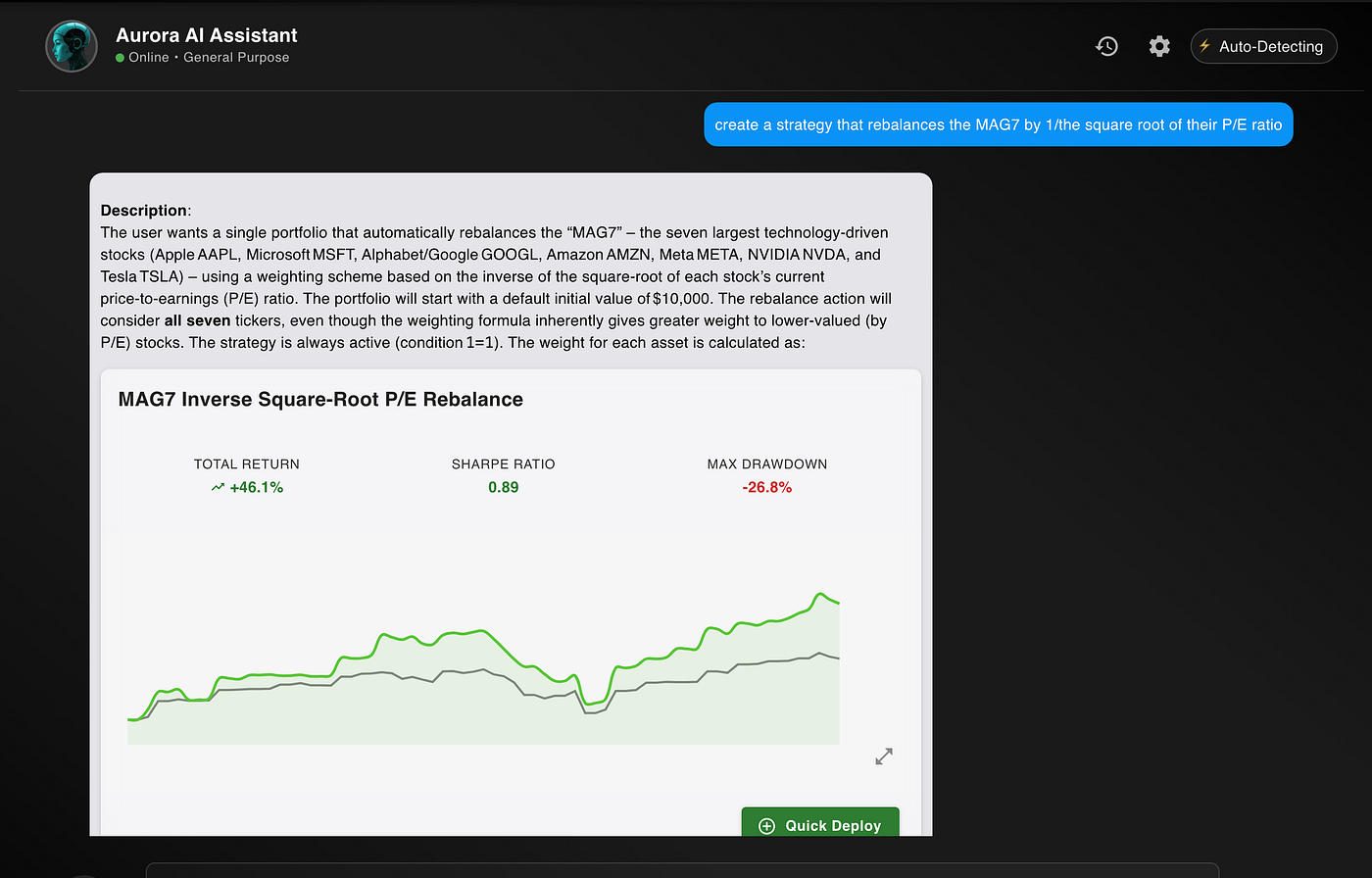

Starting with GPT-oss, I’ll send the following prompt:

create a strategy that rebalances the MAG7 by 1/the square root of their P/E ratio

GPT-oss successfully generated this complex JSON object in one shot. It’s syntactically valid (as judged by the fact that we can see the backtest results), and it was generated fairly quickly.

Super scientific, I know.

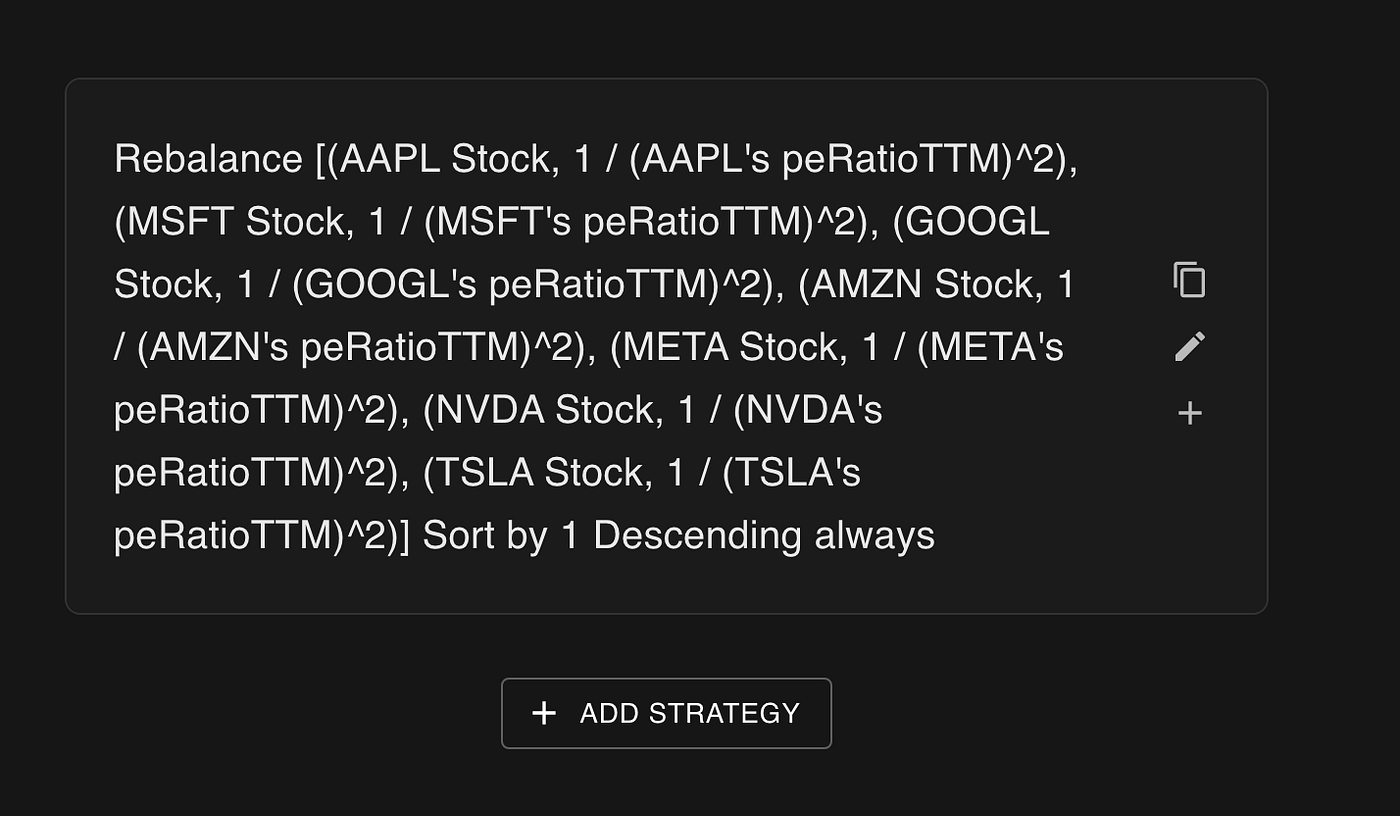

However, if we click on the strategy card and read the strategy that was generated, we notice a huge glaring issue.

The AI created a strategy called “Rebalance [(AAPL Stock, 1 / (AAPL’s peRatioTTM)²), …] Sort by 1 Descending always”

While a little bit difficult to parse, we’re basically saying we’re rebalancing this list of stocks by 1 divided by the stock’s PE ratio squared.

Squared. Not square root. The model generated a semantically-invalid indicator.
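To see how much that one exponent matters, here’s a quick sketch comparing the two weightings. The tickers and P/E ratios are made up, and I’m assuming the raw values get normalized so the weights sum to 1, which may not be exactly how NexusTrade does it.

```python
def normalized_weights(pe_ratios: dict[str, float], exponent: float) -> dict[str, float]:
    """Weight each stock by 1 / (P/E ** exponent), scaled so the weights sum to 1."""
    raw = {ticker: 1.0 / (pe ** exponent) for ticker, pe in pe_ratios.items()}
    total = sum(raw.values())
    return {ticker: weight / total for ticker, weight in raw.items()}


# Hypothetical tickers and P/E ratios, picked so the math is easy to follow.
pe = {"AAA": 16.0, "BBB": 64.0}

requested = normalized_weights(pe, exponent=0.5)  # what I asked for: 1 / sqrt(P/E)
generated = normalized_weights(pe, exponent=2.0)  # what the model built: 1 / (P/E)^2
```

With the square root, the cheaper stock gets two thirds of the portfolio. With the square, it gets 16/17 of it (about 94%), which is a very different strategy.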

In contrast, the strategy that was generated by Gemini’s model was both syntactically and semantically correct. It decided to rebalance every 30 days instead of every day, mostly because we didn’t give a rebalancing frequency. But for all intents and purposes, it is a valid strategy.

I tried again with another strategy example. I said the following:

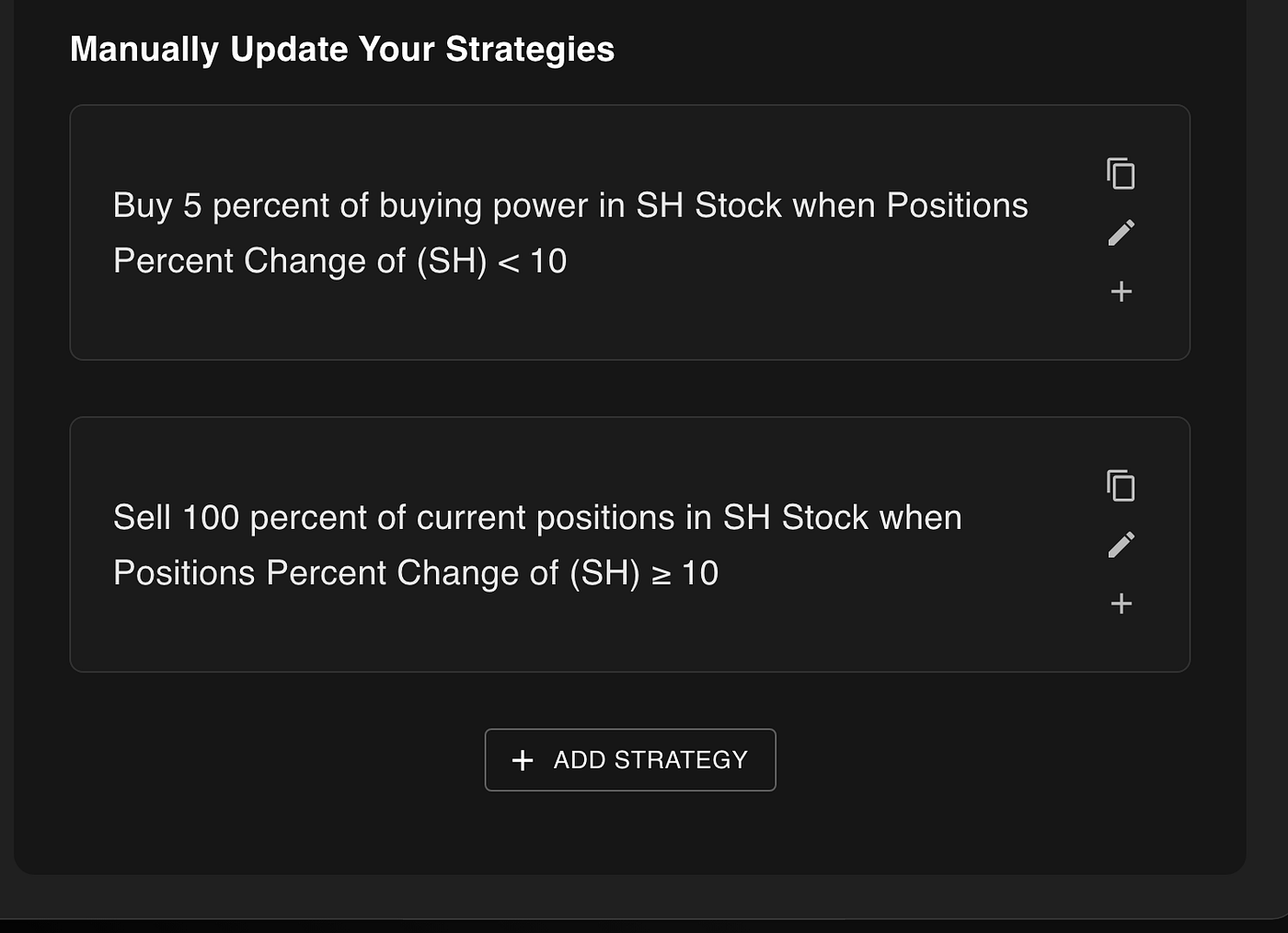

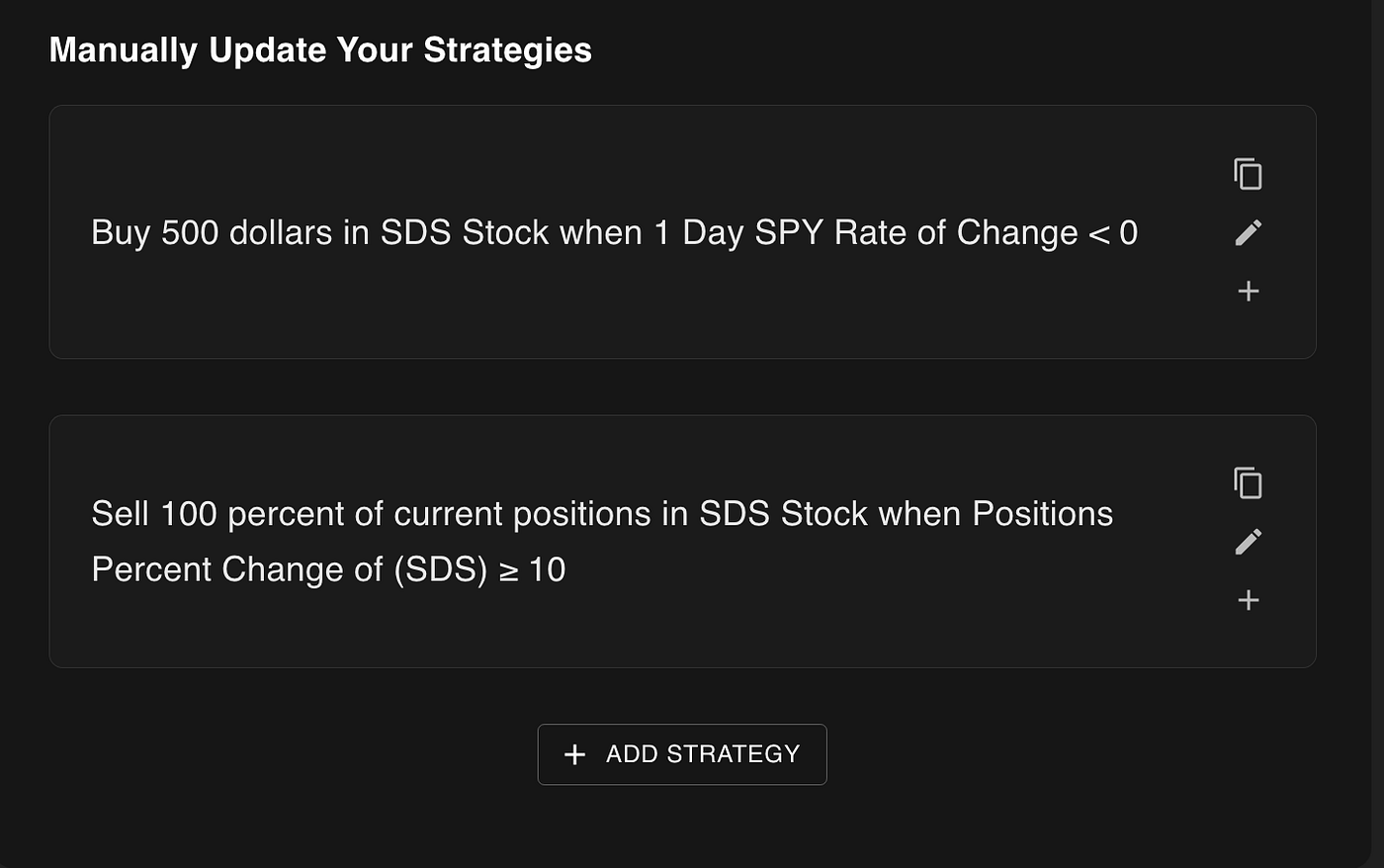

Create a strategy that buys a small amount of an inverse SPY ETFs until the positions are up 10% or more, and then sells all of the positions. the goal is to try to short the market smartly

GPT-oss generated a portfolio that didn’t match the request. It chose to buy SH stock when the positions were down, but never made any initial buy actions.

In contrast, the strategy generated by Gemini 2.5 Flash executed buy actions, which is more aligned with what the user would want.
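This kind of mistake is something you could catch automatically with a semantic check. Here’s a toy sketch of one. The rule format (an `action` plus a `requires_open_position` flag) is something I invented for this example. It is not the real NexusTrade schema, which is far bigger.

```python
def can_open_position(rules: list[dict]) -> bool:
    """True if at least one buy rule can fire while the portfolio is still empty."""
    return any(
        rule["action"] == "buy" and not rule.get("requires_open_position", False)
        for rule in rules
    )


# Toy version of the failure above: the only buy rule needs an existing position.
never_enters = [
    {"action": "buy", "requires_open_position": True},       # buy more when positions are down
    {"action": "sell_all", "requires_open_position": True},  # sell when up 10% or more
]

# Toy version of a strategy that makes an initial buy.
enters = [
    {"action": "buy"},                                       # buy a small amount
    {"action": "sell_all", "requires_open_position": True},  # sell when up 10% or more
]

checks = (can_open_position(never_enters), can_open_position(enters))
```

Both rule sets are perfectly valid JSON-style data. Only the second one can ever actually place a trade.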

So in this very short, rudimentary experiment, Gemini’s closed-source model wins. What can we learn from this?

How do I know which model to use for my use-case?

This article has lots of experimentation and jargon, but chances are, you don’t care about any of that.

You want to know which model is the best.

And unfortunately, I’m going to have to give you the most annoying answer in the world to this question. Law students hear it every day in class.

It depends.

On SQL Query generation tasks, the OpenAI model scored marginally better than Gemini 2.5 Flash. It’s also more than 2x cheaper, and is able to generate highly accurate and semantically-valid SQL queries faster than any other model tested.

But it still wasn’t the very best. Gemini 2.5 Pro still reigned supreme, even though it cost 10x more and was 30% slower. Nonetheless, if your goal is accuracy at all costs, Gemini still has the better model.

But that’s not everybody’s goal for every single use-case. Different use-cases favor different trade-offs.

- Do you value data privacy or the ability to fine-tune your models? OpenAI’s oss wins hands-down

- Do you care about accuracy above all else, speed and cost be damned? Google’s Gemini 2.5 Pro is the clear winner

- Do you need to generate a high volume of syntactically and semantically valid JSON objects? The answer here is ambiguous. We know that Gemini 2.5 Flash is very good at this, but with some prompt engineering and/or fine-tuning, OpenAI’s oss can likely match or exceed its performance

Personally, I’m sticking with the Gemini family for NexusTrade… but just for now. Having used them for months, I know that they are reliable, accurate, and inexpensive.

While GPT-oss is much cheaper than Gemini 2.5 Flash, they’re both insanely inexpensive when compared to some of the bigger models like Opus and Grok. These results are not a compelling reason for me to make the switch.

Yet.

The fact that OpenAI’s models are open-source is going to be a turning point in the world of AI. It’s a significant shift in strategy, one that will be discussed for years to come. We know, at the very least, that these new open-source models, particularly the 120B parameter variant, are actually extremely good for real-world tasks, unlike Llama, which only did well in the benchmarks. And, with the power of the open-source community, they are bound to get even better. Fast.

Who knows… maybe when that time comes, I’ll make a more objective benchmark to evaluate the model for JSON generation. Hell, get me to 1,000 claps, and I’ll make an update the next day.

Want to see how AI is used to help everyday investors make smarter, data-driven investing decisions? Check out NexusTrade. NexusTrade empowers the average person to learn real financial analysis and to deploy algorithmic trading strategies.

Give it 3 minutes of your time. Your grandchildren will be forever grateful.
