$25,000 Public Portfolio Challenge · Episode 9 · Week 1 recap

I'm up $2,546 in one week because I trusted an AI with my portfolio. What I thought would happen next, written the night week 1 ended.

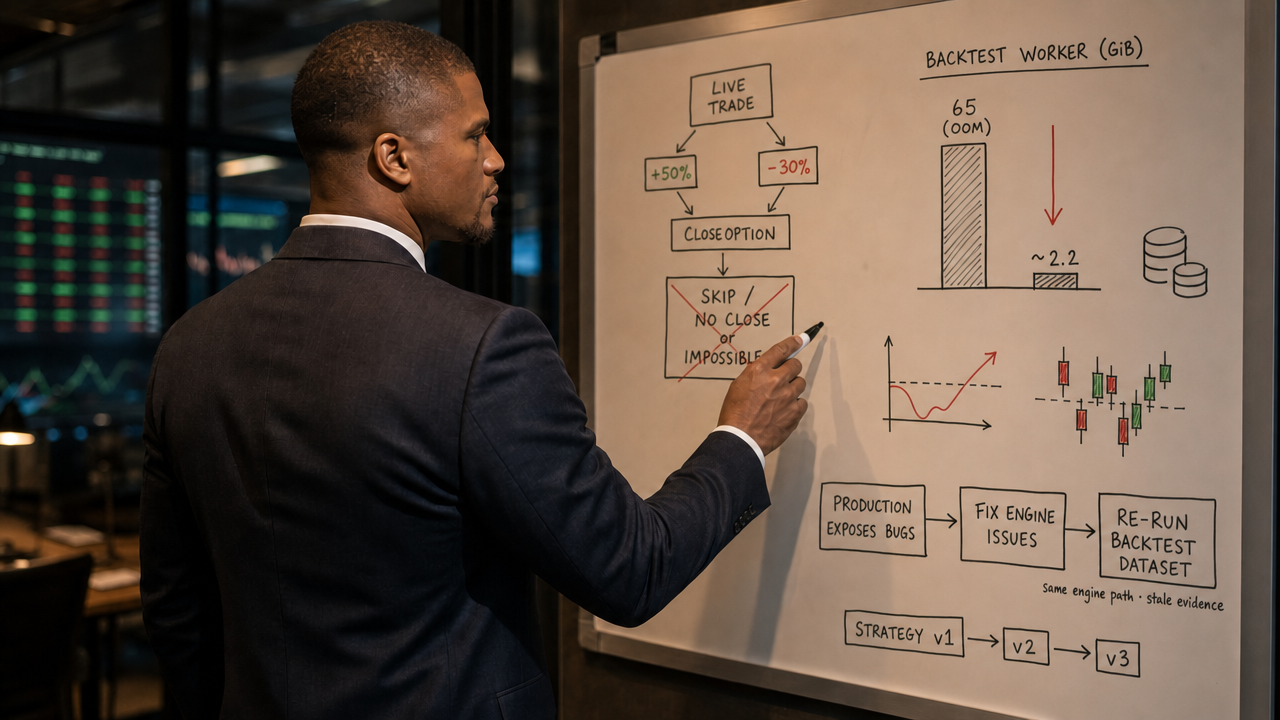

$25,000 became $27,546 while the system kept finding ways to be wrong: a broken drawdown definition, an impossible close rule, and a backtester that hit 65 GiB. The account won. The engine got rebuilt.

Sunday night, week 1 close

Ten weeks ago I made an impulsive decision.

Since October 2020, that has been the mission of everything I've built at NexusTrade. A system that could trade for me without me being in the loop on the trades themselves.

I have been paper-trading on it consistently since December 4th, 2023. Almost two and a half years.

I have over 100 different portfolios on the platform. Some with strategies that have soared beyond my wildest dreams.

And a few that cratered, with returns that would leave even the og crypto crowd in a panic-induced cold sweat.

The losers are what stopped me from taking the next step.

But then, something in me changed.

Maybe it was the fact that my app felt near "feature complete" for the first time ever.

Or maybe, I was sick of answering "it's not ready yet" when a family member asked how my little app was doing.

I decided to do something about it.

10 weeks ago, on February 28th, 2026, I impulsively declared that I would let an AI take over my portfolio. On March 3rd, 2026, I deposited $25,000 into Public and connected it with my NexusTrade account. And last week, on Tuesday, May 5th, I let the AI execute its first automated trade.

I've gained over $2,500 since then.

Two thousand five hundred dollars in a week. That's more than 10%. You can audit my returns here, and if you've been reading from the beginning, you know that I'm telling the truth.

Here is my week 1 recap of letting an AI control my portfolio.

Live book moved

This post is anchored to Sunday night, May 10, 2026 right after week 1. I have already updated the live public portfolio since then: new strategies, new risk, same transparency rule. Treat anything below that sounds like a forecast as the plan that night, not a guarantee of what you will see in the account today.

The book

Three open. One closed Day 3. Zero new entries this week.

Days 4 and 5 were quiet on the trading side. No fresh entries fired, which gave me the weekend to stop staring at the P/L and start fixing the engine underneath it.

| Spread | Ct | Net Debit | Mark | P/L | % Move | Status |

|---|---|---|---|---|---|---|

| GOOG 385 / 415 | 2 | $1,998 | $2,908 | +$1,044 | +56.0% | over close trigger |

| NVDA 205 / 230 | 3 | $2,385 | $3,405 | +$1,020 | +42.8% | pinned near +50% |

| AVGO 430 / 490 | 1 | $1,975 | $1,927 | -$48 | -2.4% | recovered from −37.7% |

| META 610 / 655 | 1 | $1,470 | $1,879 | +$409 | +27.8% | closed Day 3 |

GOOG is already past the +50% take-profit line;

NVDA is still climbing toward it.

That is where this rule is supposed to matter. The gap is mechanical:

GOOG still did not close on Friday. The book kept logging

CloseOptionSignal passes, but the

CloseOption action blocked itself, so no exit order

shipped. The Reflection at the end has the Boolean details.

AVGO bled to −37.7% on Wednesday. I had assumed the per-name 25% drawdown stop would close it.

It didn't, because the PositionMaxDrawdown indicator wasn't measuring what its name implied. Both backtests and live trading used the same definition, so the strategy was working as designed; the design just didn't match my mental model. I shipped a semantic fix Thursday that makes the indicator track position P/L drawdown directly. By Friday, AVGO had reversed on its own and the spread was back to a $48 loss instead of the $745 forced stop-out it would have been under the fixed indicator.

That recovery was luck, not design.

Version 1, before Sunday's changes

The original strategy got tighter than I wanted.

Wednesday's audit produced exactly one parameter change: position sizing dropped from 10% per fired entry to 5%.

Every spread on this week's book opened on the new sizing. That's also

why no new spreads have fired since: 5% of $25k is $1,250, and a

single 30-DTE bull-call debit spread on GOOG or NVDA costs almost that

much for one contract. The 5% cap and the

OpenSpreadCount(ticker) < 1 gate are both doing what

they were told. They're just doing it tight enough that the basket

effectively stops trading after the initial fills.

The audit ran on backtests using the old position-drawdown indicator, which I now know was measuring the wrong thing. Whether 5% still wins against the fixed indicator is one of the things Episode 10 will tell me.

That is the system that ran the first week: four entries, four per-name drawdown stops, one global close ladder, one risk overseer, one idea scout. Eleven strategies in version 1.

That Sunday night, the live portfolio was already moving beyond that. Two new option-entry strategies were staged for AMD and MU, and a Monday review agent had been added on top. The count was already fourteen. That is the point of this challenge: the live system changes as the evidence changes.

Sunday night

Version 1 ran that week. Then I started generating version 2.

That Sunday night I started Aurora on version 2 in another tab. The output gets the full reveal in Episode 10.

The weekend

The next version needs better evidence. That starts with a cleaner backtest engine.

Next week I want to ground the strategy work in historical evidence instead of intuition. That means rerunning every backtest in the database on the most up-to-date engine, then using the results to generate candidate strategies aligned to my watchlist and basket.

The engine could not handle the workload.

The first time I pointed it at an options backtest the size of what I want to run (a 4,547-stock dynamic universe, 5 years of daily bars, and option-chain lookups for each candidate), it ran out of memory at 65 gigabytes and got killed by the OS.

Here is what I did.

(a) Lazy option chain loading

Dynamic option strategies were eagerly loading 2.9 million option rows up front, before the simulation even started.

I replaced that with a demand-driven loader. Chains get fetched only when a tick actually needs them, with bulk-fetch per cold underlying and per-day caching.

Cloud canary: max RSS 31 GB → 12.4 GB. Bit-exact parity with the old eager path, proven by a commit-by-commit cloud bisect. Cost: 106 seconds slower per run. Memory win, not a speed win.

(b) Raw underlying prices from daily bars, not intraday

After (a) shipped, the canary still ran out of memory.

New diagnosis: the option-chain load was now zero, but the engine was loading the entire intraday stock-price parquet just to look up raw underlying prices. It needs raw prices because option strikes are quoted in raw dollars, while the daily price feed is split-adjusted. Fix:

raw_price = adjusted_daily_price / SplitCurve::factor_at(ts)

Eight new unit tests cover NVDA's 10:1, AVGO's 10:1, GOOG's 20-for-1, and a no-split passthrough. Mag7 load-only repro before/after:

| Metric | Before | After | Delta |

|---|---|---|---|

| Memory used | 19.7 GiB | 1.85 GiB | 10.7× smaller |

| Time to run | 97.8s | 14.1s | 6.9× faster |

| Backtest result | – | – | bit-exact match |

Then the same change against the full 3,410-stock universe: 2.22 GiB of memory.

One-thirtieth of the 65 GiB that killed the engine on Friday.

(c) Cache and infrastructure cleanup

Capped the in-process parquet cache at 1 GiB on every backtesting and optimizer worker.

Deleted the legacy intraday-stock loading path entirely. Rollback now requires a code revert, not a runtime toggle.

Stood up a cloud A/B canary so the next memory regression of this shape gets caught the same way this one did.

The week, in three numbers

65 GiB out-of-memory crash → 2.22 GiB on the same workload. 97.8s → 14.1s on the load test. Bit-exact result parity through both changes. The engine is now ready for a test it could not survive last Monday.

Reflection

The account made money. The system showed me where it was weak.

That is the actual week 1 result. Not just +$2,546. Not just three open spreads and one realized winner. The useful part is that real money forced the system to reveal what paper trading let me ignore.

AVGO exposed a definition problem.

PositionMaxDrawdown sounded like it measured position P/L

drawdown. It did not. Backtests and live trading shared the same

definition, so the strategy was internally consistent. It just was not

measuring what I thought it was measuring.

GOOG exposed a close-logic problem.

The spread was over +50%, but the take-profit and stop-loss filters

sat on the same CloseOption action and were checked in

sequence. To close, the spread effectively had to be above +50% and

below −30% at the same time. Impossible. The important part here

is that backtests and live trading shared that code path, so this was

not a live-only bug. It was an engine bug.

All of these bugs are fixed now.

It is worth naming the counterfactual. With a broken drawdown indicator and an impossible close rule both live on the book, a market that moved the wrong way this week would have made this a very different post. NVDA, META, and GOOG all rallied. AVGO reversed before the broken stop ever had to be honored. The +10% week is real, and the engineering was real, but the gap between "design worked" and "tape was kind" is smaller than the headline suggests.

The uncomfortable part is that fixing them changes the evidence base. A strategy that looked good under the old drawdown indicator and the old close logic might not look as good under the fixed engine. The historical backtests I used to create version 1 were testing something different than what I thought I had designed.

So I am rerunning the numbers.

Version 1 made money. Version 1 also taught me that the next strategy has to be built on cleaner backtests, corrected close semantics, and explicit handling of the positions already on the book.

Aurora is generating that version now. Whatever it returns, I am showing the backtests, the rules, and the trade decisions.

If it clears the gates, it deploys to the live portfolio. If it doesn't, the next episode says why. The point of this challenge was never to write a clean victory lap after the fact.

Episode 10 goes one level wider.

The 100K-backtest article from last year ran on whatever strategies users had submitted: an unconstrained universe.

The question now is different: given my watchlist of 16 names and the current 4-name basket, what option strategies survive the same gates? Sortino > 1.5. Beats SPY. Max drawdown better than −50%. At least 10 trades.

Same engine. Same statistical rigor. Different scope: candidates aligned to the names I actually want to trade.

The output is a ranked list of options strategies on my universe, with confidence intervals, that I can deploy directly. That is what Episode 10 is about.

You can subscribe to the Public Portfolio Challenge and follow the live portfolio to see the changes as they happen.

Week 2 is where the experiment stops being a launch story and starts becoming a feedback loop.

The

NexusTrade MCP server

gives Claude Code and Cursor the same

fetch_portfolios,

backtest_portfolio, and event-query

tools I used this week.

No comments yet.