I Switched from Claude’s Sonnet to OpenAI’s O1 Model for Financial Research. Finance Jobs WILL NOT Survive.

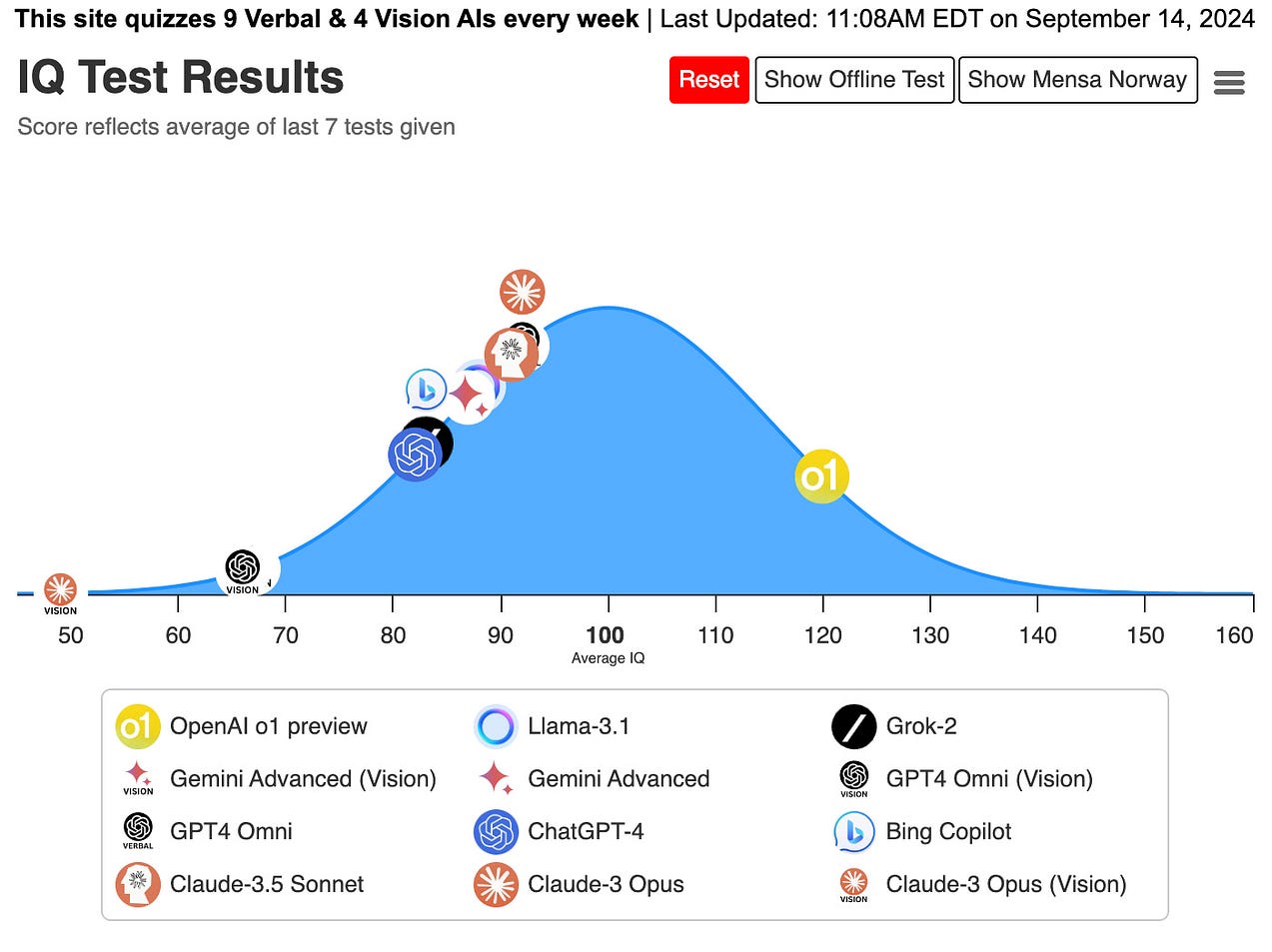

To preface this article, I want to say that I am not biased. If you search online critically, you'll find countless benchmarks explaining just how powerful this language model is.

But I am not one who's easily persuaded by a benchmark. Beating a benchmark is actually quite simple – just train the model on the answers. I’ve used models like llama3 and phi, and while they are cute toys that I like to run on my laptop, I wouldn’t dare use them for a production app unless I had a ridiculously strong reason to invest the time and effort.

And the more I use O1, the more my mind is blown. I am not even waiting for the O3 model to say this – we’ve genuinely reached artificial superhuman intelligence. Traditional finance jobs will NOT be the same in the next 10 years.

Here’s why.

Summarizing “Reasoning” Models

The O1 model is a Large Reasoning Model. Unlike traditional large language models, these models actually take time to “think” about the correct answer, employing complex techniques, such as chain of thought prompting and reinforcement learning.

You would think that this “thinking” is a marketing gimmick: that it doesn’t actually result in better outcomes, and that it's just a way for OpenAI to siphon $200/month from the average Joe.

I’m here to declare that it is NOT a gimmick.

It IS super intelligent.

Here’s why.

What am I defining as Super Intelligent?

My definition of “super intelligent” is different from most people’s…

I will say a model is super intelligent if it is smarter than the majority of the population.

There’s already strong evidence that this is the case right now. The O1 model scores a 120 on the Norway Mensa IQ test, putting it above more than 90% of humans.
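If you want to sanity-check that percentile yourself, here is a minimal sketch. It assumes IQ scores follow a normal distribution with a mean of 100 and a standard deviation of 15, which is a common norming convention but not something I have confirmed for this particular test.

```python
from statistics import NormalDist

# Assumption: scores are normed to mean 100 and standard deviation 15.
iq_scale = NormalDist(mu=100, sigma=15)

def percentile(score: float) -> float:
    """Share of the population expected to score below `score`."""
    return iq_scale.cdf(score)

# Print the share of people expected to score below 120.
print(f"{percentile(120):.1%}")
```

Under that assumption, a score of 120 lands just above the 90th percentile, which lines up with the claim.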

But for my definition of super intelligence, I am not talking about its IQ on a random test.

What I'm talking about is proficiency with real tasks: tasks that 97% of humans simply cannot accomplish. The 3% of the population that can do the task would take hours, whereas O1 would take minutes or less.

The task I’m talking about is complex financial analysis.

Using LLMs for Financial Research

Language models excel at financial research, but the naive approach sucks.

What you DON’T do in order to perform financial analysis

Naively, you’d ask an LLM questions such as “what AI stocks have the highest market cap?”

Because these models don't have access to real-time information, they simply won't give you the correct answer.

At best, the model is smart enough to know that it does not know the answer. At worst, it will outright lie to you, a phenomenon known as hallucination.

Instead, we can use large language models to generate queries.

What the prompt engineering experts will do instead

Smart people use platforms like NexusTrade instead.

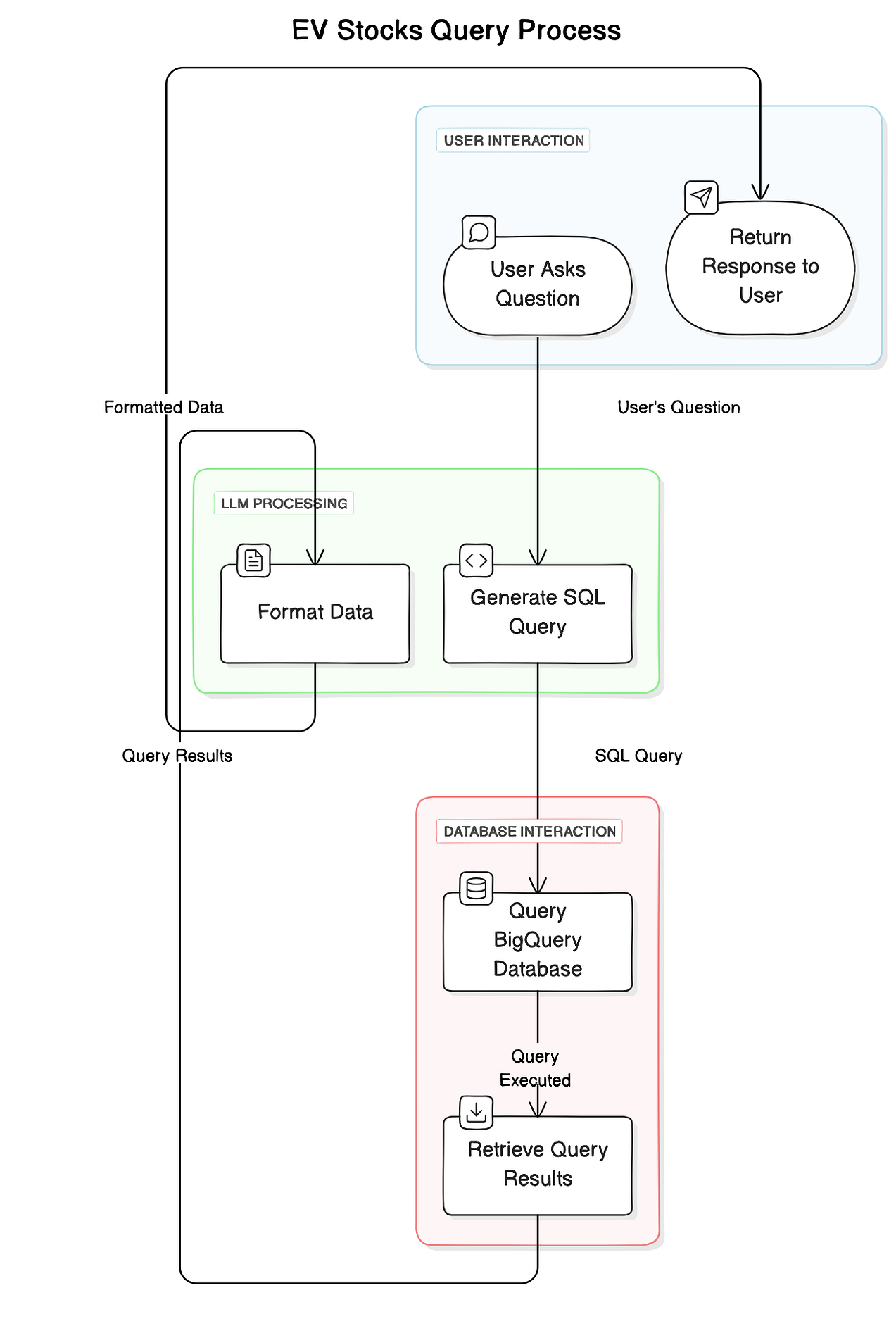

NexusTrade is an AI-Powered algorithmic trading platform. It employs the following technique to facilitate financial analysis.

A query is used to retrieve data from a database. Data scientists and financial analysts write queries to find patterns in the stock market.

By having the LLM write the query instead, we allow an AI model to retrieve real-time financial information. After receiving the results, the LLM further processes them, either by formatting them or by combining them with other insights.
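To make the flow concrete, here is a minimal sketch of the idea, not NexusTrade's actual implementation. The `generate_sql` function is a hypothetical stand-in for the LLM call (it returns a hard-coded query), and the `stocks` table, its columns, and the tickers are all made up for illustration.

```python
import sqlite3

def generate_sql(question: str) -> str:
    """Hypothetical stand-in for an LLM call that turns a question into SQL.

    A real system would send the question and the table schema to a model
    and return whatever query the model writes.
    """
    return (
        "SELECT ticker, market_cap FROM stocks "
        "WHERE industry = 'AI' ORDER BY market_cap DESC LIMIT 3"
    )

def answer(question: str, conn: sqlite3.Connection) -> list[tuple]:
    query = generate_sql(question)         # step 1: the model writes the query
    rows = conn.execute(query).fetchall()  # step 2: the database supplies the data
    return rows                            # step 3: a model would format or summarize these rows

# Toy in-memory database with made-up tickers and values.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE stocks (ticker TEXT, industry TEXT, market_cap REAL)")
conn.executemany(
    "INSERT INTO stocks VALUES (?, ?, ?)",
    [
        ("AAA", "AI", 300.0),
        ("BBB", "AI", 900.0),
        ("CCC", "Retail", 500.0),
        ("DDD", "AI", 100.0),
    ],
)

rows = answer("what AI stocks have the highest market cap?", conn)
print(rows)
```

The point of the design is that the numbers come from the database, not from the model's memory, so the model only has to get the query right.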

In theory, any LLM should be suitable, and for basic questions, it is.

In practice, O1 is at a level that no other language model is even close to.

The Stochastic Parrot Claude vs The Enlightened O1

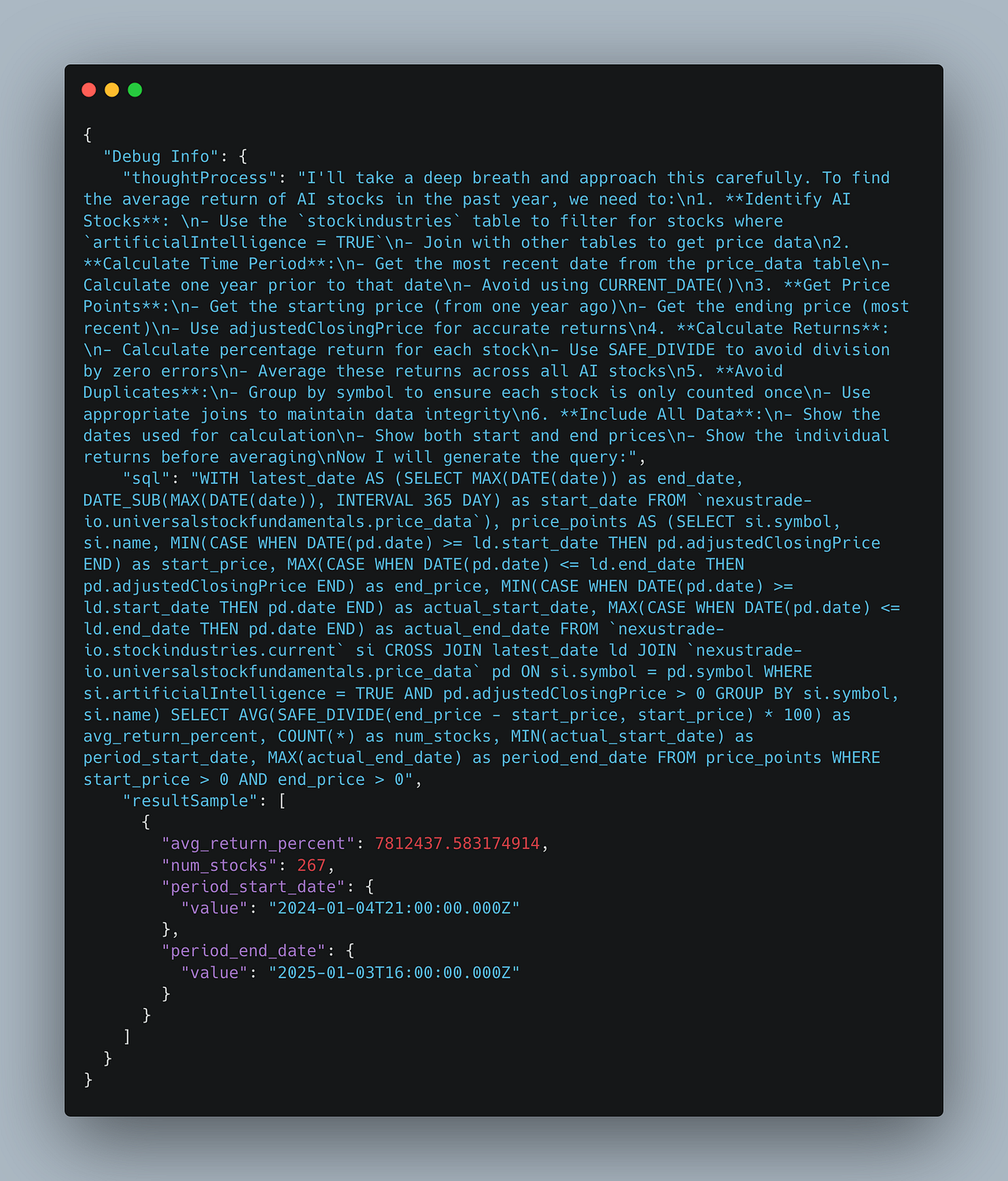

Using NexusTrade, if you ask Claude for the average return of all AI stocks in the past year, the answer is embarrassingly wrong…

At a value of 7.8 MILLION percent.

Apparently, we could all be trillionaires right now…

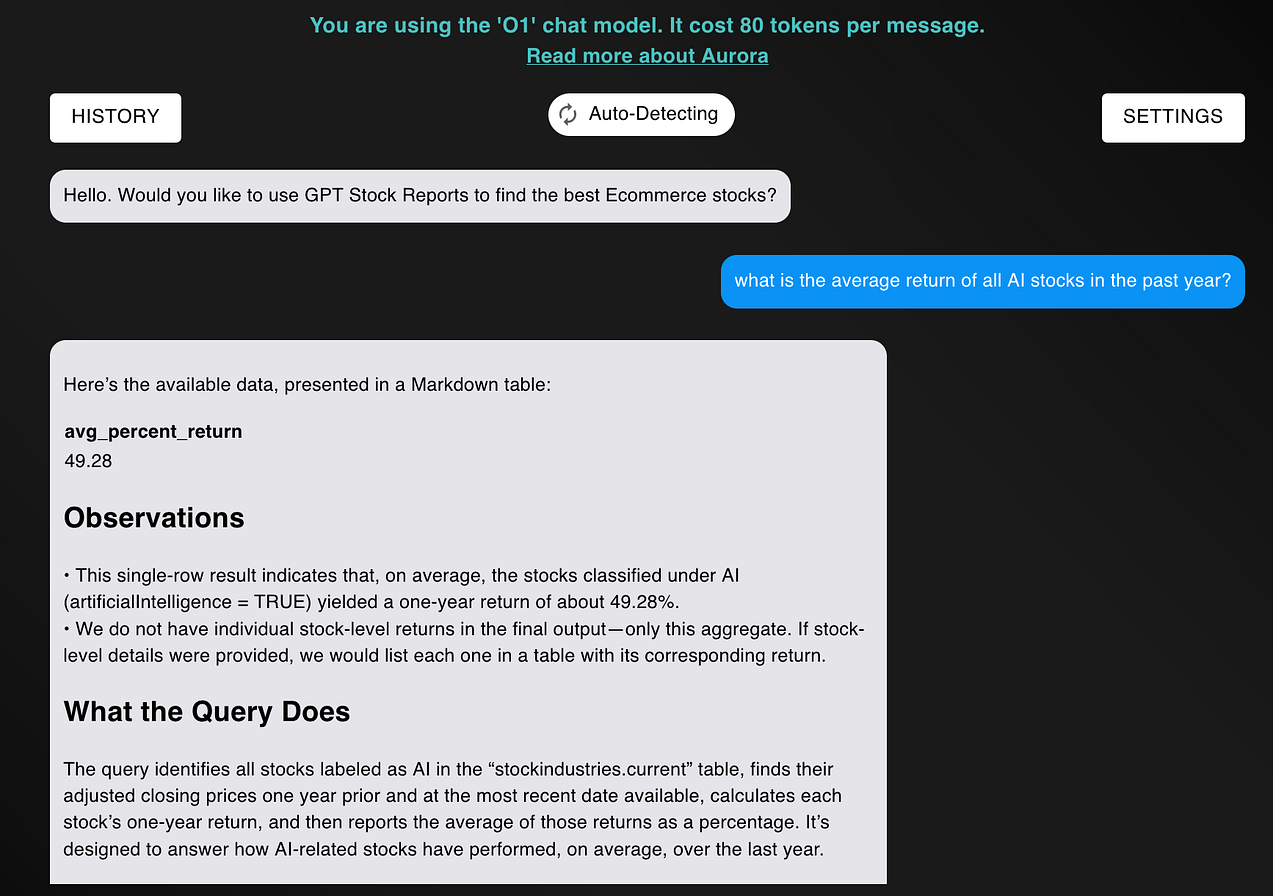

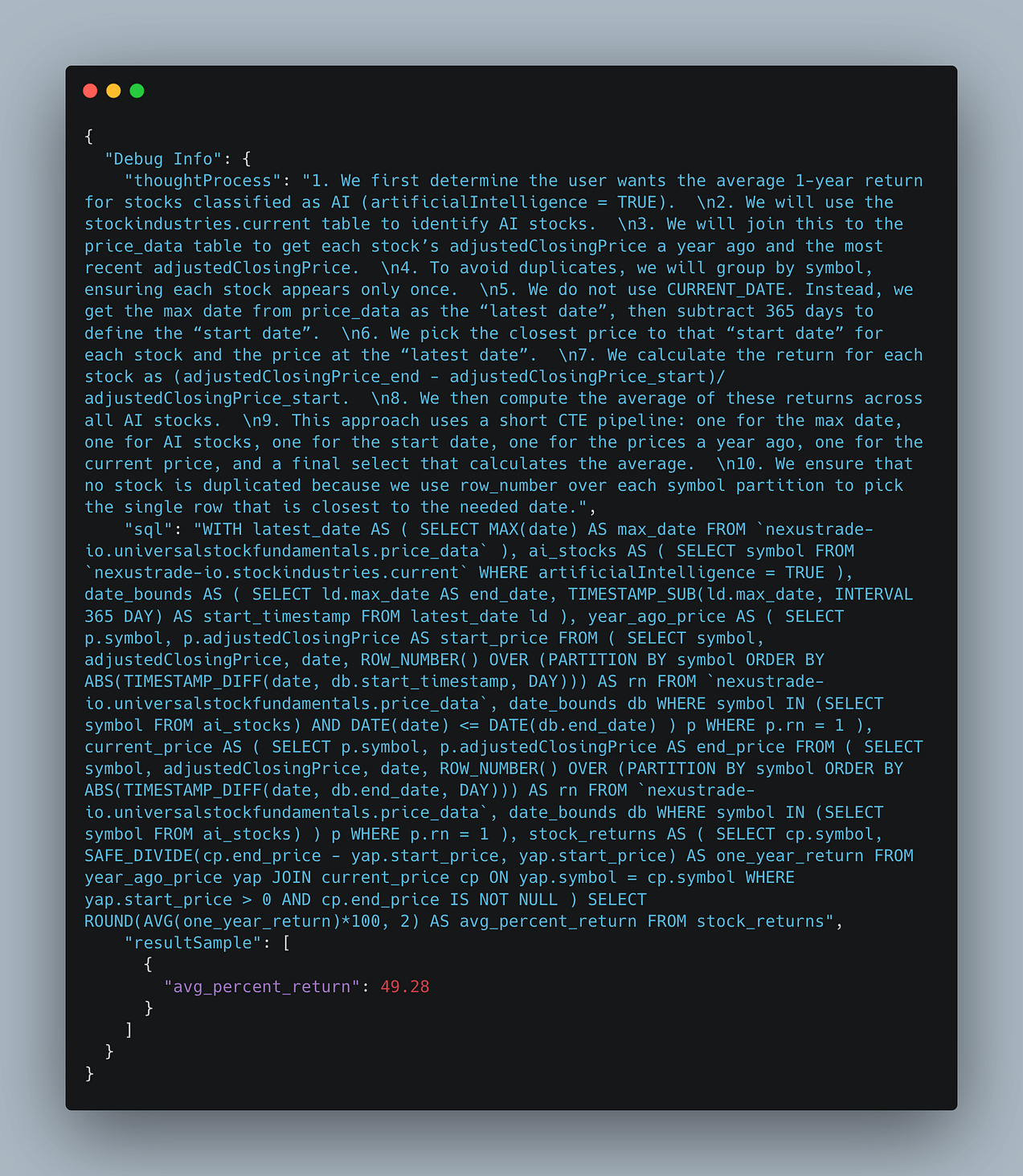

In contrast, when we ask O1, the answer is right on the very first try.

This is not cherry-picked. Throughout the past month, I have found that O1 is capable of answering EXTREMELY complex financial analysis questions.

I then took this question one step further.

With the O1 model, I was effortlessly able to ask exceedingly challenging questions, and it would get them right on its first try within minutes.

For example, I asked:

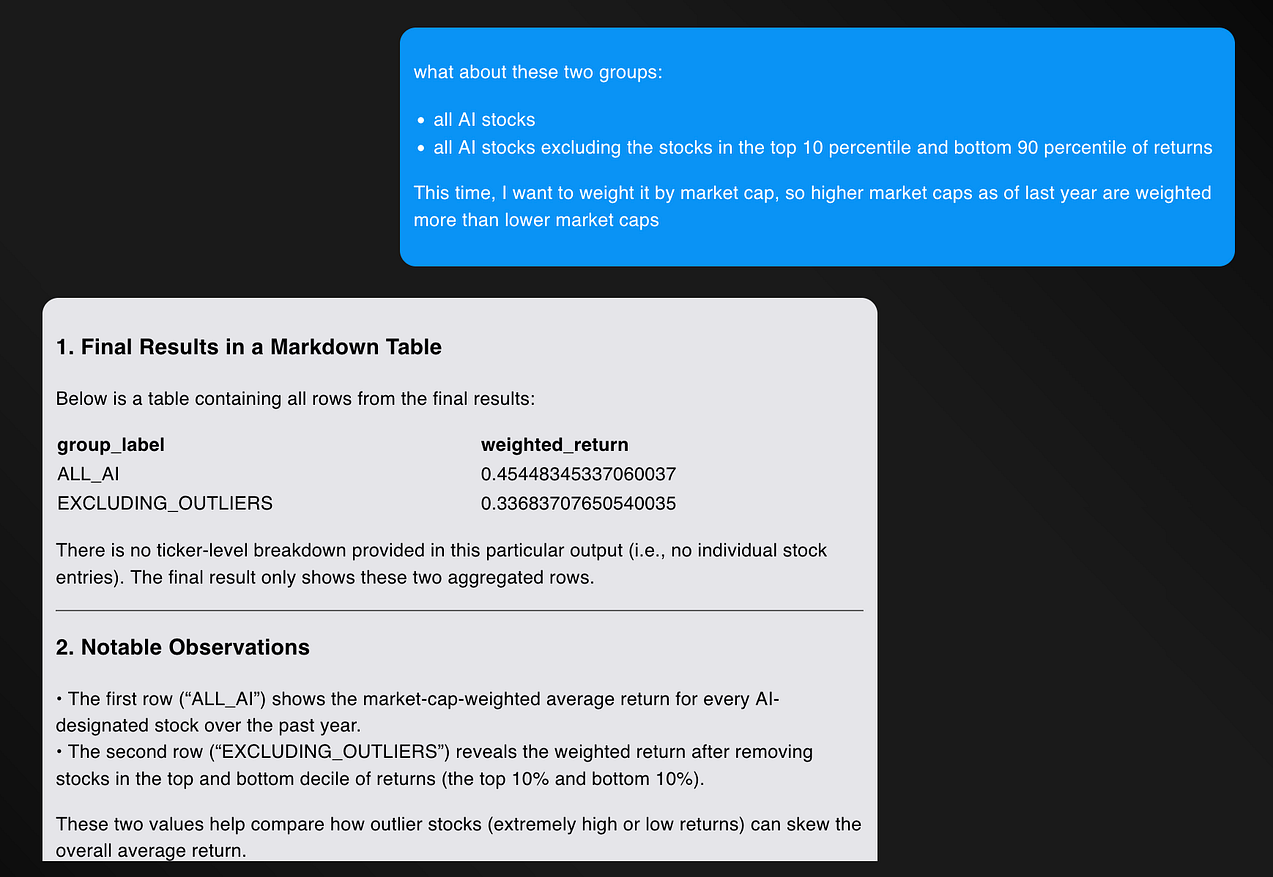

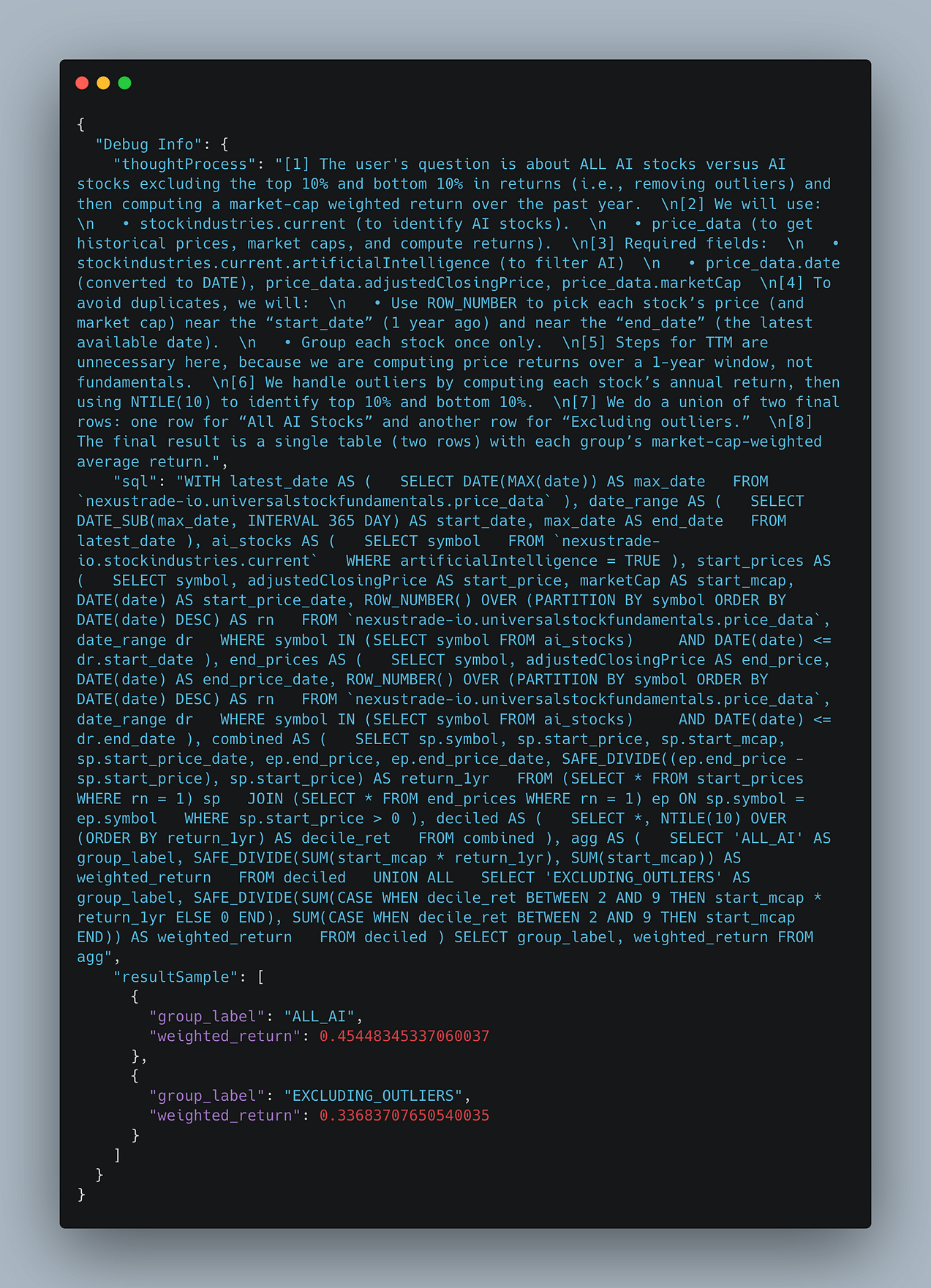

what about these two groups:

* all AI stocks

* all AI stocks excluding the stocks in the top 10 percentile and bottom 90 percentile of returns

This time, I want to weight it by market cap, so higher market caps as of last year are weighted more than lower market caps

And it was correct, on its first try.

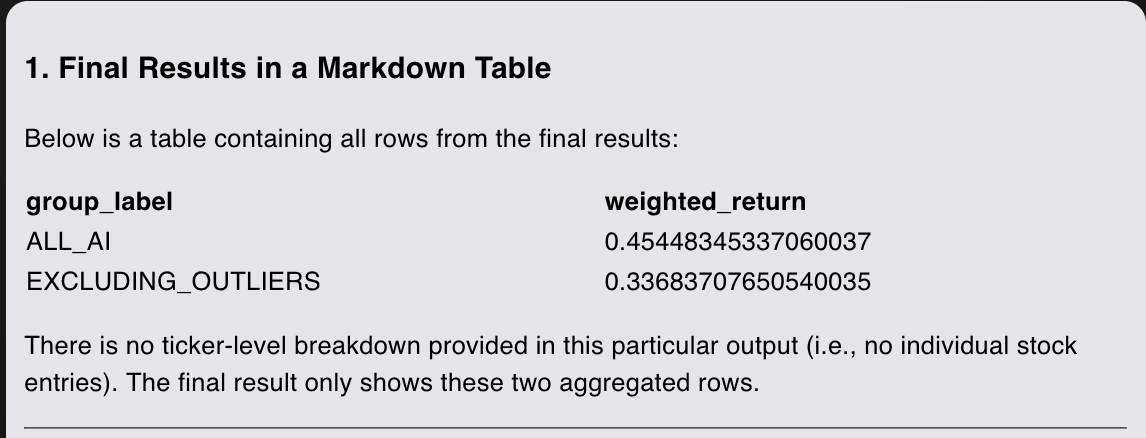

The analysis shows that market-cap weighted returns for AI stocks were 45% on average and 33% excluding outliers.
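For anyone unsure what that calculation involves, here is a minimal sketch in Python rather than SQL. It is not the query O1 generated. The data is made up, and I am assuming the second group means dropping the best and worst 10% of stocks by return.

```python
def weighted_return(stocks):
    """Market-cap weighted average return: sum(cap * ret) / sum(cap)."""
    total_cap = sum(cap for _, cap, _ in stocks)
    return sum(cap * ret for _, cap, ret in stocks) / total_cap

def drop_outliers(stocks, lower_pct=10, upper_pct=90):
    """Keep stocks whose return rank falls between the two percentile cutoffs."""
    ranked = sorted(stocks, key=lambda s: s[2])
    n = len(ranked)
    # Integer arithmetic avoids floating-point surprises in the cutoffs.
    return ranked[n * lower_pct // 100 : n * upper_pct // 100]

# Toy data: (ticker, market cap as of last year, one-year return in percent).
# Every value here is invented for illustration.
stocks = [
    ("A", 100, -50.0), ("B", 200, 0.0), ("C", 300, 10.0), ("D", 400, 20.0),
    ("E", 500, 30.0), ("F", 100, 40.0), ("G", 200, 50.0), ("H", 300, 60.0),
    ("I", 400, 70.0), ("J", 500, 400.0),
]

all_stocks = weighted_return(stocks)
trimmed = weighted_return(drop_outliers(stocks))
print(f"all: {all_stocks:.1f}%  excluding outliers: {trimmed:.1f}%")
```

In this toy sample, one huge winner with a large market cap drags the weighted average far above the trimmed figure, which is exactly why comparing the two groups is useful.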

I can’t stress this enough – I retrieved this answer in minutes. Even an experienced, highly intelligent SQL expert would likely need 30 minutes to several hours to write the same query.

I am dumbfounded. I couldn’t even perform the same analysis on Claude. I would need to do some complex prompt engineering, use advanced techniques like retrieval-augmented generation, and, God forbid, resort to fine-tuning.

But with O1, I can just ask my question.

And it is just correct, out-of-the-box, no crazy prompt engineering required, on its very first try. Don’t believe me? You can see the full conversation for yourself here. I’ve also listed the queries generated in the Appendix below.

Concluding Thoughts

For some reason, we moved the goalposts on what artificial super intelligence means.

If we use our common sense, we know that it means an AI model that's smarter than smart human beings.

And I don't know about you, but I personally know maybe one or two people who are smart enough to write a database query as complex as the ones OpenAI’s O1 model writes.

I mean, look at the query. Unlike Claude 3.5 Sonnet’s response, it was correct on the first try, and we could ask far more difficult questions.

Now, I'm not going to claim that this is perfect. Because of the significant increase in accuracy, we might be more prone to trusting the results out of the box whereas we may have applied more scrutiny with other models.

That is objectively a bad thing: the cost of mistakes with this model is higher because we hold it to a higher standard.

But this is a good problem to have. The OpenAI O1 model is super intelligent, by every reasonable definition of the words.

And this is the dumbest it’ll ever be.

The traditional role of financial analysts is over. We don’t need to hire an army of data scientists, whose only role is to write queries to a database.

We can simply use large language models. They are faster, cheaper, and honestly, more accurate.

The world will be a much scarier place in 2026.

Thank you for reading! As of January 5th, 2025, there are VERY few platforms that allow you to utilize OpenAI O1 for financial research. In my research, I found literally one: my app NexusTrade.

This is your chance to uncover REAL patterns in the stock market effortlessly. Create a free account on NexusTrade, and use data to have the most profitable year investing that you have ever seen.

Thousands already have. Why haven’t you?

Appendix

The following appendix shows the exact queries and thought processes generated by the model. These are important for verifying the claims I make in this article, but they wouldn’t flow well in the middle of it.

1. Claude 3.5 Sonnet query for “what is the average return of AI stocks in the past year”

2. OpenAI O1 query for “what is the average return of AI stocks in the past year”

3. OpenAI O1 query for “what about these two groups: all AI stocks and all AI stocks excluding outliers, with returns weighted by market cap”
