It’s hard as fuck to use LLMs for financial research. I did it anyways

The challenge in converting English into LLM Functions

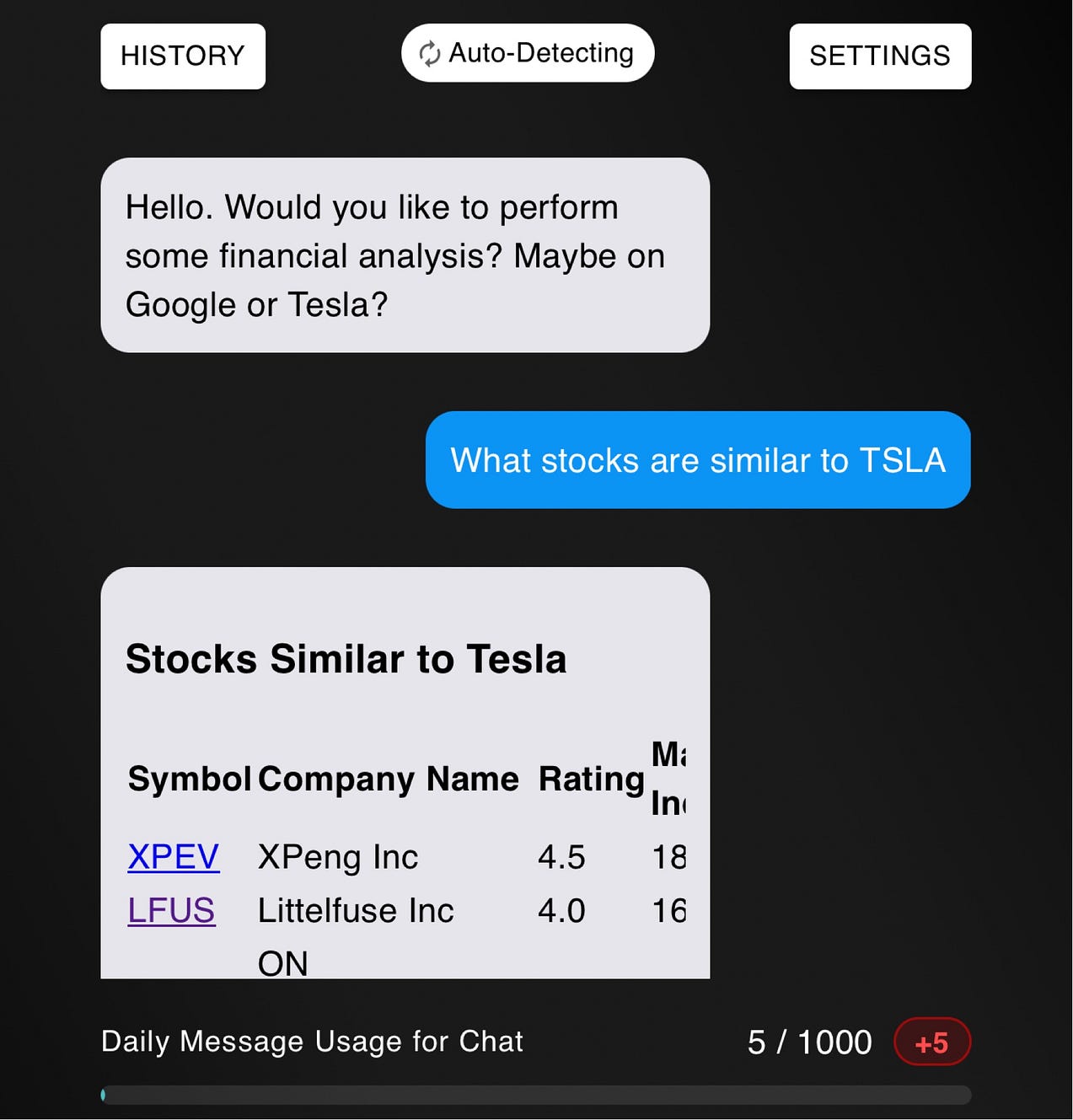

If I asked you, which stocks are most similar to Tesla, what would you say?

One investor might start listing off other automobile stocks. They might say stocks like Ford and Toyota because they also have electric vehicles.

Another investor might think solely about battery technology and robotics.

And yet, a third might just look at technology stocks with a similar market cap.

This is an inherent problem with language. Programming languages don’t have this issue because you have to be extremely precise about what you actually want.

And because of this language barrier, it is extremely hard to effectively use large language models for financial research.

And yet, I did it anyways.

The Problem With Using Traditional Language Models for Financial Research

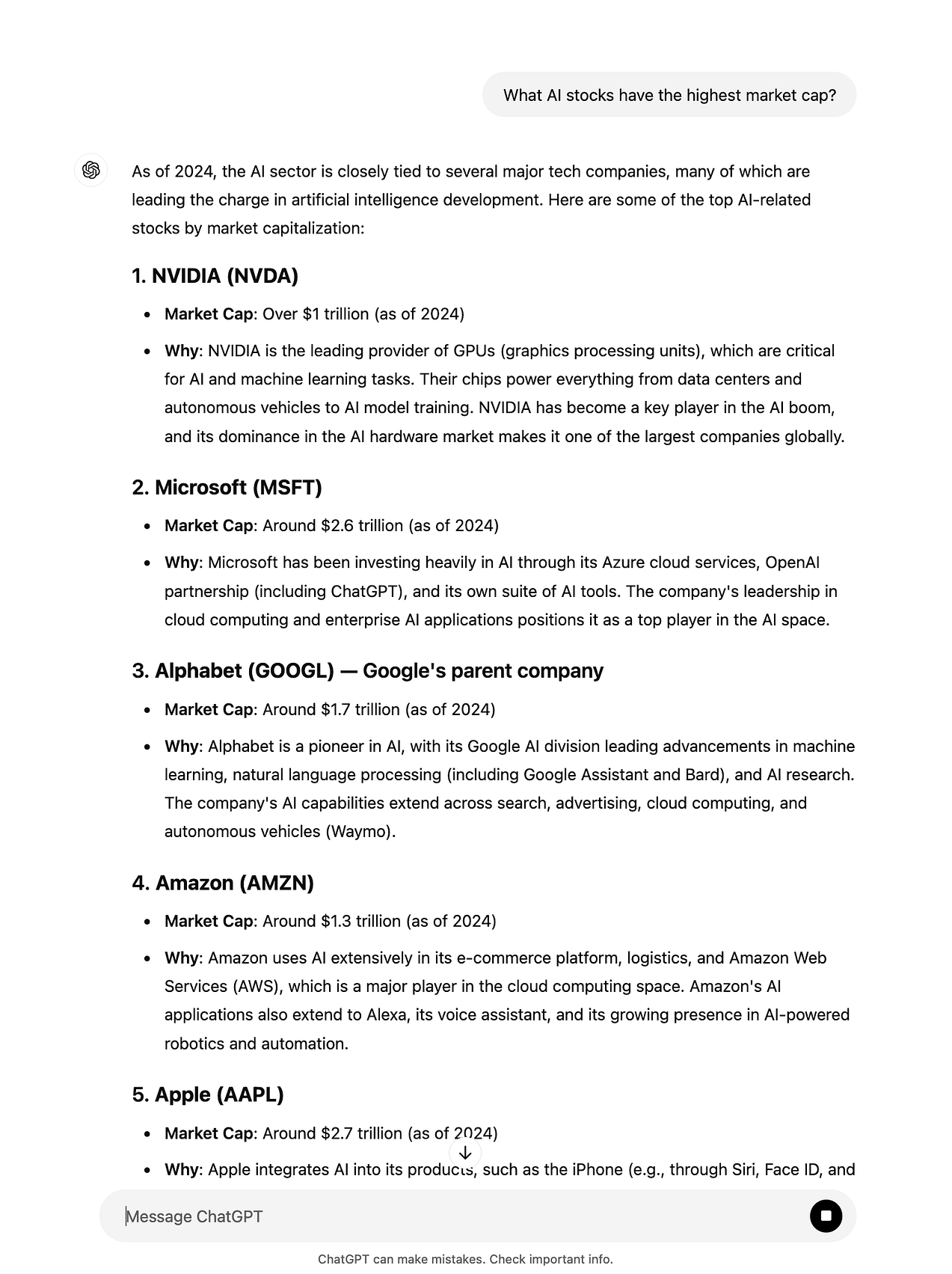

Naively, you might think that ChatGPT alone (without any augmentations) is a perfectly suitable tool for financial research.

You would be wrong.

While ChatGPT can answer basic questions, such as “what does ETF mean?”, it’s unable to provide accurate, current, data-driven, factual answers to complex financial questions. For example, try asking ChatGPT any of the following questions.

- What AI stocks have the highest market cap?

- What EV stocks have the highest free cash flow?

- What stocks are most similar to Tesla (including fundamentally)?

Because of how language models work, ChatGPT is basically guessing the answer from its most recent training data. This makes it extremely prone to hallucinations, and there are a myriad of questions that it simply won’t know the answer to.

This is not to mention that it’s unable to simulate different investing strategies. While the ChatGPT UI might be able to generate a simple Python script based on a handful of technical indicators, it isn’t built for complicated or real-time deployment of trading strategies.

That is where a specialized LLM tool comes in handy.

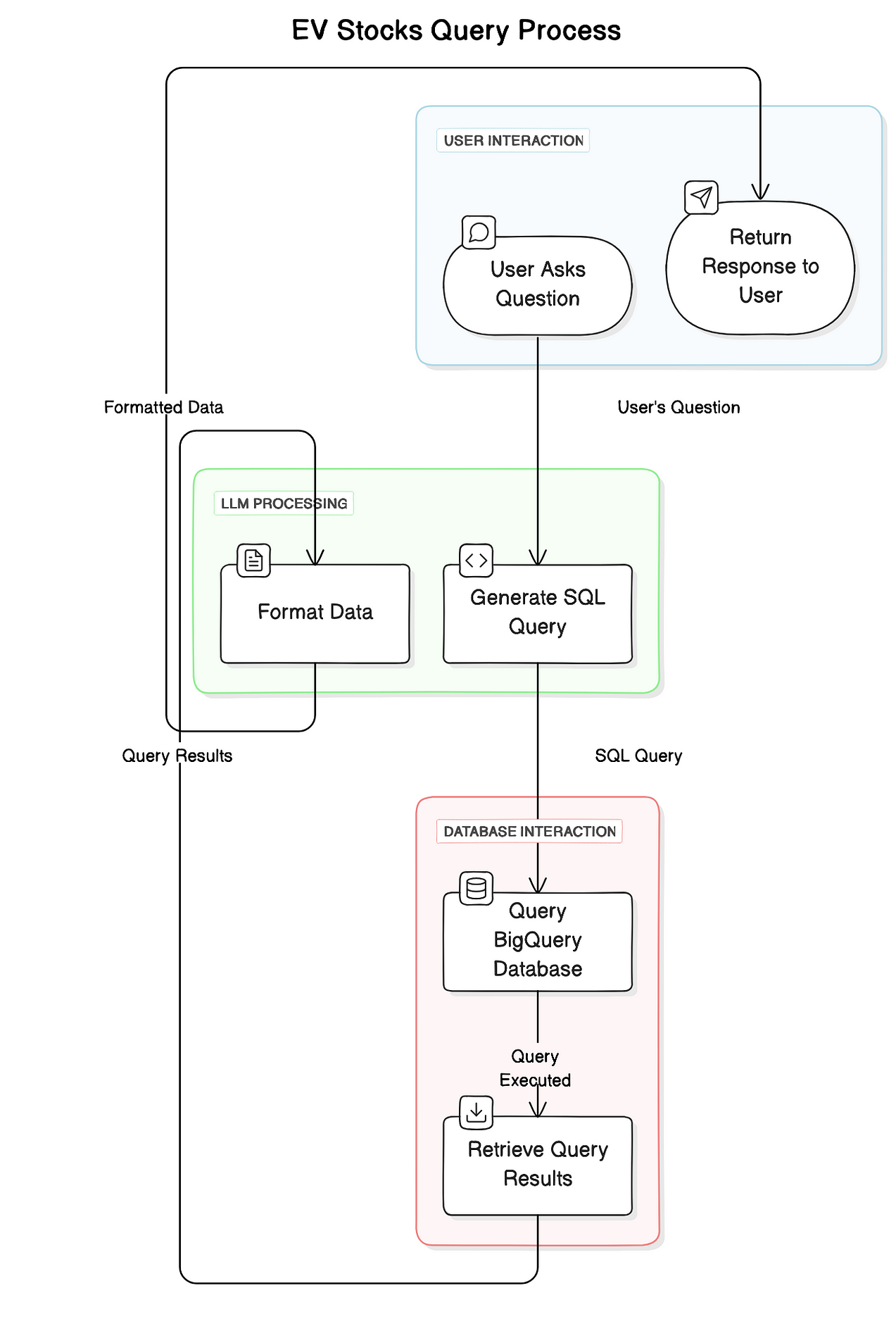

Distilling real-time financial knowledge into an LLM: Function-calling

Specialized language model tools are better than general models like ChatGPT because they are better able to interact with the real world and obtain real-time financial data.

This is done using function-calling.

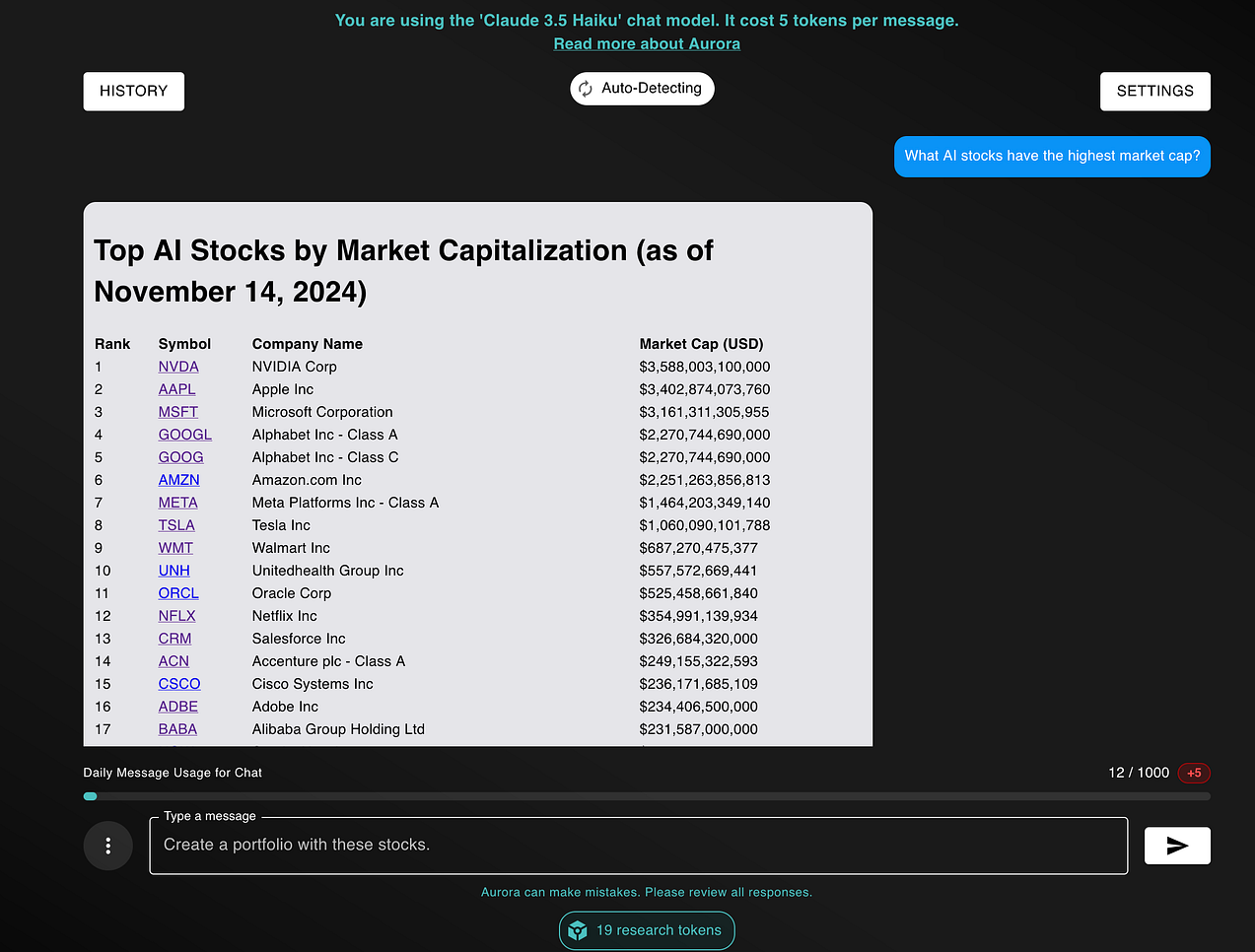

Function-calling is a technique where instead of asking LLMs to answer questions such as “What AI stocks have the highest market cap?”, we instead ask the LLMs to interact with external data sources.

This can mean having LLMs generate JSON objects and call external APIs or having the LLMs generate SQL queries to be executed against a database.

After interacting with the external data source, we obtain real-time, accurate data about the market. With this data, the model is better able to answer financial questions such as “What AI stocks have the highest market cap?”
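
To make the idea concrete, here is a minimal sketch of function-calling in Python. Everything in it is an assumption for illustration: the tickers and market caps are made up, the `top_by_market_cap` tool is hypothetical, and the model's JSON output is hard-coded rather than produced by a real LLM. It is not how any particular product implements this, just one way the pieces could fit together.

```python
import json
import sqlite3

# Made-up rows standing in for a real-time market data source (assumption).
ROWS = [
    ("AAA", "AI", 300.0),
    ("BBB", "AI", 120.0),
    ("CCC", "EV", 80.0),
    ("DDD", "AI", 950.0),
]


def build_db():
    """Create an in-memory database holding the sample rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE stocks (ticker TEXT, industry TEXT, market_cap REAL)"
    )
    conn.executemany("INSERT INTO stocks VALUES (?, ?, ?)", ROWS)
    return conn


def top_by_market_cap(conn, industry, limit):
    """Hypothetical tool the model is allowed to call."""
    cur = conn.execute(
        "SELECT ticker, market_cap FROM stocks WHERE industry = ? "
        "ORDER BY market_cap DESC LIMIT ?",
        (industry, limit),
    )
    return cur.fetchall()


# Registry of functions the model may request by name.
TOOLS = {"top_by_market_cap": top_by_market_cap}


def dispatch(conn, model_output):
    """Parse the JSON the model produced and run the matching function."""
    call = json.loads(model_output)
    func = TOOLS[call["name"]]
    return func(conn, **call["arguments"])


# In a real system this string would come from the LLM; here it is hard-coded.
model_output = (
    '{"name": "top_by_market_cap", '
    '"arguments": {"industry": "AI", "limit": 2}}'
)
result = dispatch(build_db(), model_output)
print(result)
```

The model never has to "know" a market cap. It only has to pick the right function and arguments, and the database supplies the numbers. Parameterized SQL (the `?` placeholders) keeps model-supplied values out of the query string itself.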

Compare this to the ChatGPT answer above:

- ChatGPT didn’t know the current market cap of stocks like NVIDIA and Apple; its figures were wildly out of date, frozen at its last training session

- Similarly, ChatGPT’s responses were not ordered accurately based on market cap

- ChatGPT regurgitated answers based on its training set, which may be fine for AI stocks, but would be wildly inaccurate for more niche industries like biotechnology and 3D printing

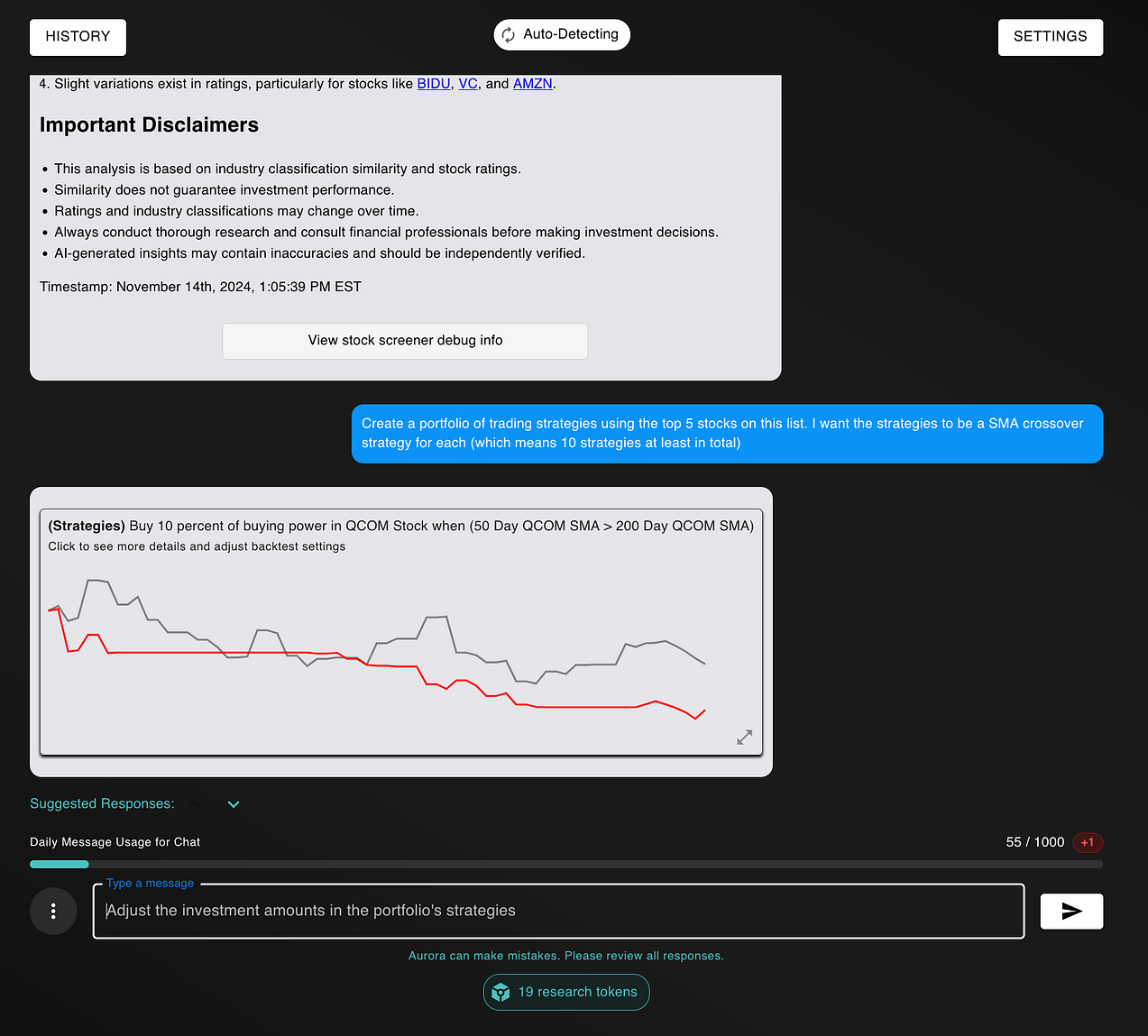

Moreover, specialized tools have other unique features that allow you to extract value. For example, you can turn these insights into actionable investing strategies.

By doing this, you can run simulations of how these stocks performed in the past, a process called backtesting. This tells you how a set of rules would’ve performed if you had executed them in the past.
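
As a rough illustration of what backtesting means, here is a minimal Python sketch. The price series is made up, and the rule (hold the stock only while the previous close is above its moving average) is a toy assumption chosen for brevity, not a recommended strategy or the method any specific tool uses.

```python
def backtest(prices, window, starting_cash=1000.0):
    """Replay a simple moving-average rule over historical closing prices.

    The decision for each day uses only prices from earlier days, so the
    simulation never peeks at data it would not have had at the time.
    """
    cash, shares = starting_cash, 0.0
    for i in range(window, len(prices)):
        average = sum(prices[i - window:i]) / window
        signal = prices[i - 1] > average  # yesterday's close vs. its average
        price = prices[i]
        if signal and shares == 0:
            # Enter: put all cash into the stock at today's price.
            shares, cash = cash / price, 0.0
        elif not signal and shares > 0:
            # Exit: sell everything at today's price.
            cash, shares = shares * price, 0.0
    # Value any remaining position at the last known price.
    return cash + shares * prices[-1]


# Made-up closing prices (assumption), oldest first.
prices = [10, 10, 10, 12, 14, 13, 11, 10]

final_value = backtest(prices, window=3)
buy_and_hold = 1000.0 / prices[0] * prices[-1]
print(f"rule: {final_value:.2f}  buy and hold: {buy_and_hold:.2f}")
```

Comparing the rule against a plain buy-and-hold baseline is the useful part: a backtest only tells you something when you have a benchmark to measure it against.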

Yet, even with function-calling, there is still an inherent problem with using Large Language Models for financial research.

That problem is language itself.

The Challenges with Using Language for Financial Research

The problem with using natural language for financial research is that language is inherently ambiguous.

Structured languages like SQL and programming languages like Python are precise. They’re going to do exactly what you tell them to do.

However, human languages like English aren’t. Different people may have different ways of interpreting a single question.

For example, let’s say we asked the question:

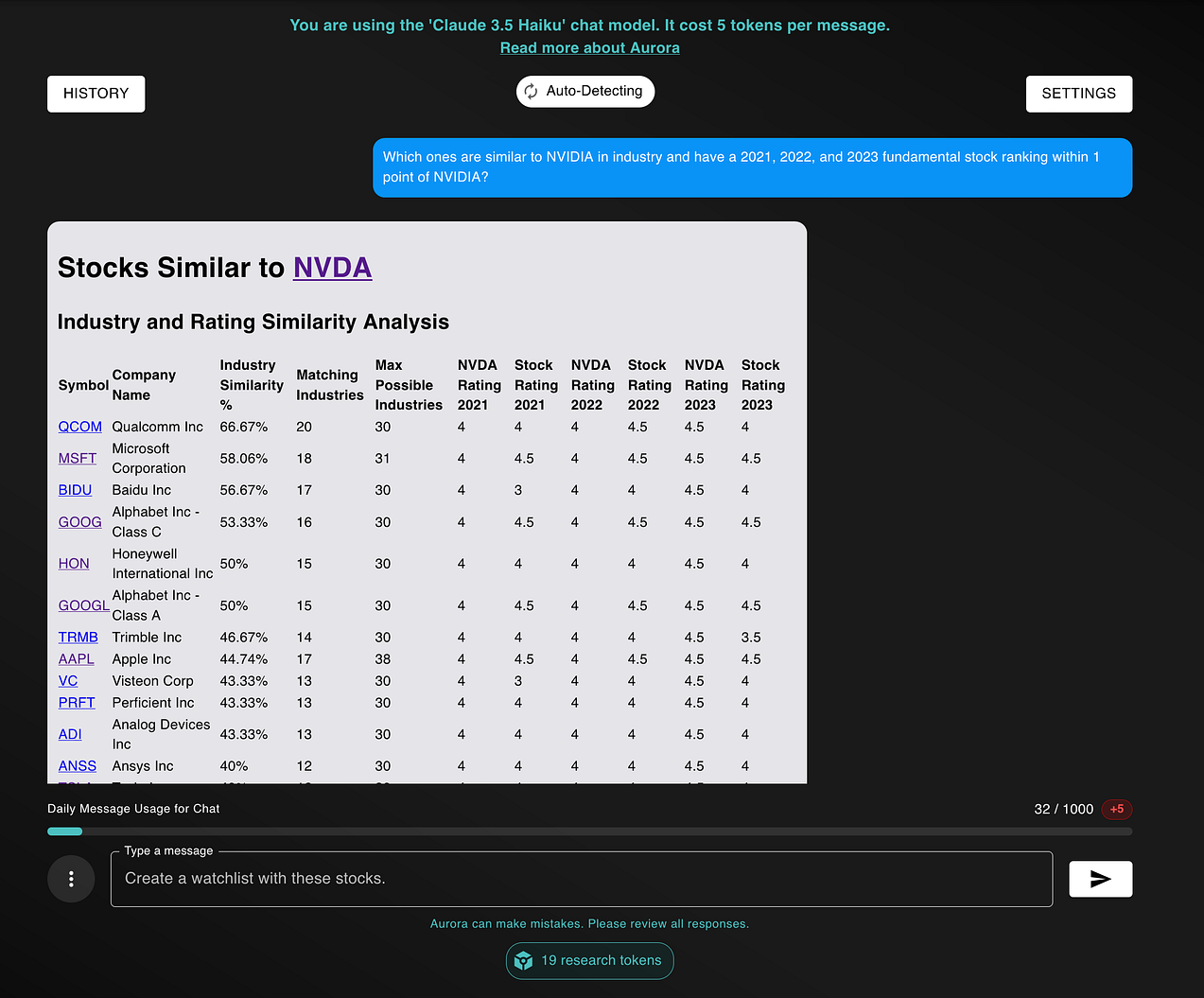

What stocks are similar to NVIDIA?

- One investor might look at semiconductor stocks with a similar financial health sheet in 2023

- Another investor might look at AI stocks that are growing in revenue and income as fast as NVIDIA

- Yet another investor might look at NVIDIA’s nearest competitors, using news websites or forums

That’s the inherent problem with language.

It’s imprecise. And when we use language models, we have to transform this ambiguity into a concrete input to gather the data. As a result, different language models might have different outputs for the same exact inputs.
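
A small Python sketch shows how one English question can turn into several different concrete queries. The stock universe, the numbers, and the three similarity rules below are all made up for illustration; the thresholds in particular are arbitrary assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stock:
    ticker: str
    industry: str
    revenue_growth: float  # year over year, as a fraction
    market_cap: float      # in billions


# Made-up universe (assumption); "REF" plays the role of the reference stock.
UNIVERSE = [
    Stock("REF", "semiconductors", 0.60, 1000.0),
    Stock("AAA", "semiconductors", 0.05, 150.0),
    Stock("BBB", "software", 0.55, 90.0),
    Stock("CCC", "retail", 0.10, 1100.0),
]


def same_industry(ref, other):
    return other.industry == ref.industry


def similar_growth(ref, other):
    return abs(other.revenue_growth - ref.revenue_growth) <= 0.10


def similar_size(ref, other):
    return abs(other.market_cap - ref.market_cap) / ref.market_cap <= 0.25


# Three equally defensible readings of "similar to".
INTERPRETATIONS = {
    "industry": same_industry,
    "growth": similar_growth,
    "size": similar_size,
}


def similar_to(ticker, interpretation):
    """Return tickers similar to `ticker` under one chosen interpretation."""
    ref = next(s for s in UNIVERSE if s.ticker == ticker)
    rule = INTERPRETATIONS[interpretation]
    return [s.ticker for s in UNIVERSE if s.ticker != ticker and rule(ref, s)]


for name in INTERPRETATIONS:
    print(name, similar_to("REF", name))
```

Each interpretation is perfectly precise once it is written as code, yet the three return completely different stocks. The ambiguity lives entirely in the step that maps the English question to one of them.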

But there are ways of solving this challenge, both as the developer of LLM apps and as an end-user.

- As a user, be precise: when using LLM applications, be as precise as you can. Instead of saying “what stocks are similar to NVIDIA?”, you can say “which stocks are similar to NVIDIA in industry and have a 2021, 2022, and 2023 fundamental stock ranking within 1 point of NVIDIA?”

- As a developer, be transparent: whenever you can, have the language model state any assumptions that it made, and give users the freedom to change those assumptions

- As a person, be aware: simply being aware of these inherent flaws with language will allow you to wield these tools with better precision and control
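
The "be transparent" advice for developers could look something like the following Python sketch. The default assumptions and their names are hypothetical, invented for this example; the point is only that every assumption is reported back to the user and can be overridden.

```python
# Hypothetical defaults the application falls back on when the user is vague.
DEFAULT_ASSUMPTIONS = {
    "similarity": "same industry",
    "fiscal_year": 2023,
    "max_results": 5,
}


def resolve_assumptions(overrides=None):
    """Merge user overrides into the defaults and describe where each came from."""
    overrides = overrides or {}
    unknown = set(overrides) - set(DEFAULT_ASSUMPTIONS)
    if unknown:
        raise ValueError(f"Unknown assumption(s): {sorted(unknown)}")

    resolved = {**DEFAULT_ASSUMPTIONS, **overrides}
    notes = []
    for key, value in resolved.items():
        source = "set by you" if key in overrides else "assumed by default"
        notes.append(f"{key} = {value} ({source})")
    return resolved, notes


# The user changes one assumption; the rest are reported as defaults.
resolved, notes = resolve_assumptions({"similarity": "revenue growth"})
for line in notes:
    print(line)
```

Showing the notes alongside the answer lets the user see which parts of the question the application filled in for them, and rerun the query with different choices.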

By combining these strategies, you’ll unlock more from LLM-driven insights than the average investor. Language models aren’t a silver bullet for investing, but when used properly, they can allow you to perform research faster, with more depth, and with better strategies than without them.

Concluding Thoughts

Nobody ever talks about the pitfalls of language itself when it comes to developing LLM applications.

Natural language is imprecise, and leaves room for creativity. In contrast, structured languages like SQL and programming languages like Python are exact — they will always return the same exact output for a given input.

Nevertheless, I’ve managed to solve these problems. For one, I’ve given language models access to up-to-date financial data that makes working with them more accurate and precise.

Moreover, I’ve learned how to transform ambiguous user inquiries into concrete actions to be executed by the model.

But I can’t do everything on my own. Language itself is imperfect, which is why it’s your responsibility to understand these pitfalls and actively work to mitigate them when interacting with these language models.

And when you do, your portfolio’s performance will be nothing short of astronomical.

Thank you for reading! If you made it this far, you must be intrinsically interested in AI, Finance, and the intersection between the two. Check out NexusTrade and see how AI can transform how you approach financial markets.
