Claude Opus 4 Approved My Mission-Critical Code. It Contained a Critical Bug.

The Unspoken Dangers of Relying on Language Models

AI makes it too easy to use too little brain power. And if I had been lazy and careless, I would’ve paid the price.

But let’s rewind a bit.

My name is Austin Starks, and I’m building an app called NexusTrade.

NexusTrade is like if ChatGPT had a baby with Robinhood, who grew up to have a baby with QuantConnect. In short, it’s a platform that allows everybody to create, test, and deploy their own algorithmic trading strategies.

While exceptionally good at testing complex strategies that operate at open and close, the platform has a major flaw… if you want to test out an intraday strategy, you’re cooked. I’m working diligently to fix this.

In a previous article, I described the different milestones of the implementation. For Milestone 2, my objective is to implement “intraday-ness” within my platform’s indicators. And, if you observe from the surface level (i.e., using AI tools), you might assume it’s already implemented! In fact, you can check it out yourself, and see the ingenuity of my implementation.

And if I had trusted the surface level (i.e., the best AI tools on the planet), I would’ve proceeded with the WRONG implementation. Here’s how Claude Opus 4 outright failed me on a critical feature.

Claude Opus 4 Looked at the Implementation and Signed Off!

To implement my intraday indicators, the first step was to check whether the existing implementation was correct. To do this, I asked Claude the following:

What do you think of this implementation? Is it correct? Any edge cases?

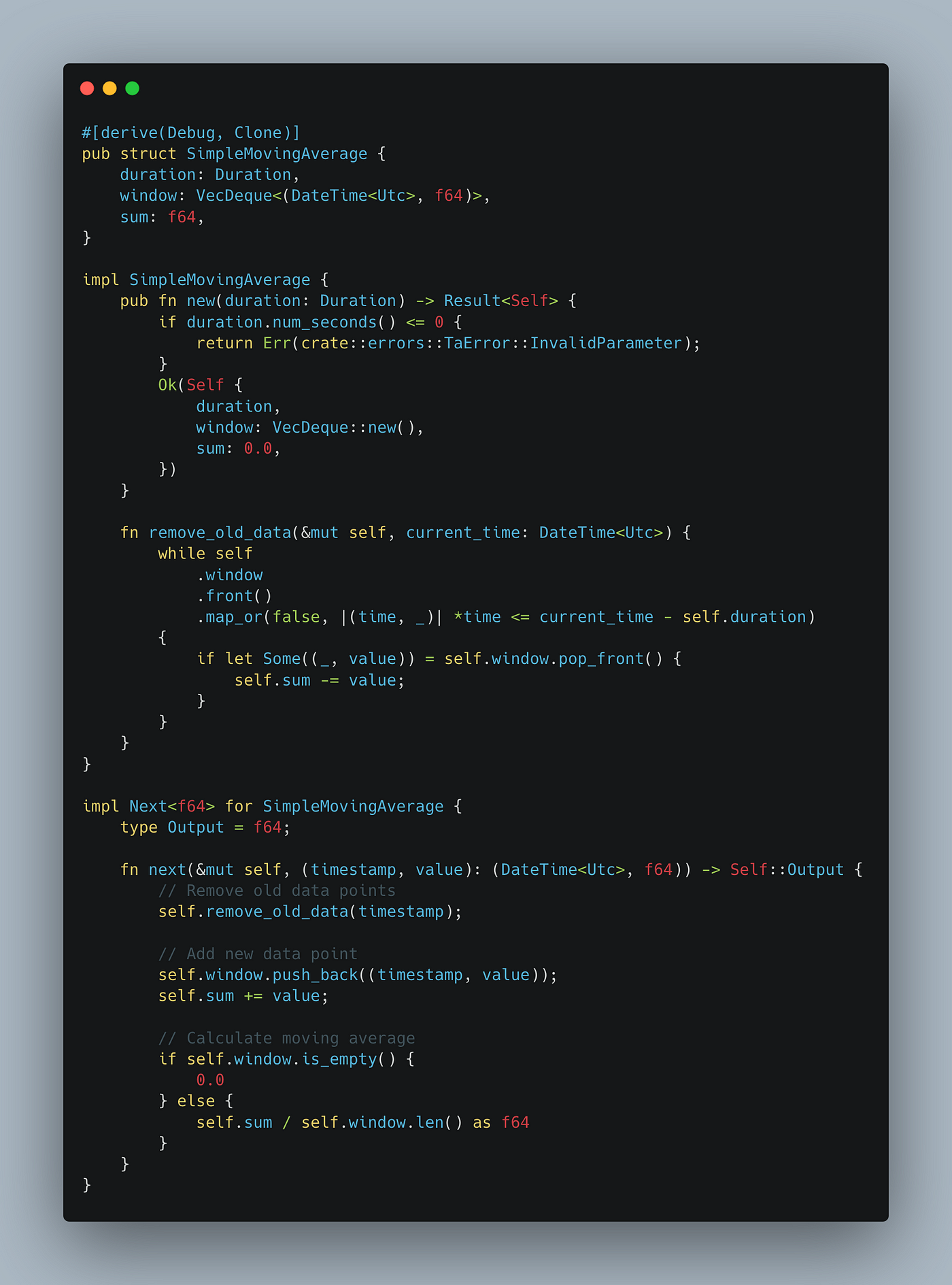

For our overly ambitious engineering reader, here’s the implementation of the Simple Moving Average. See if you can spot the bug yourself.

The way this implementation works is by taking a Duration as the input. This parameter helps us maintain a sliding window and works for any period — 30 days, 30 minutes, or even 30 seconds.

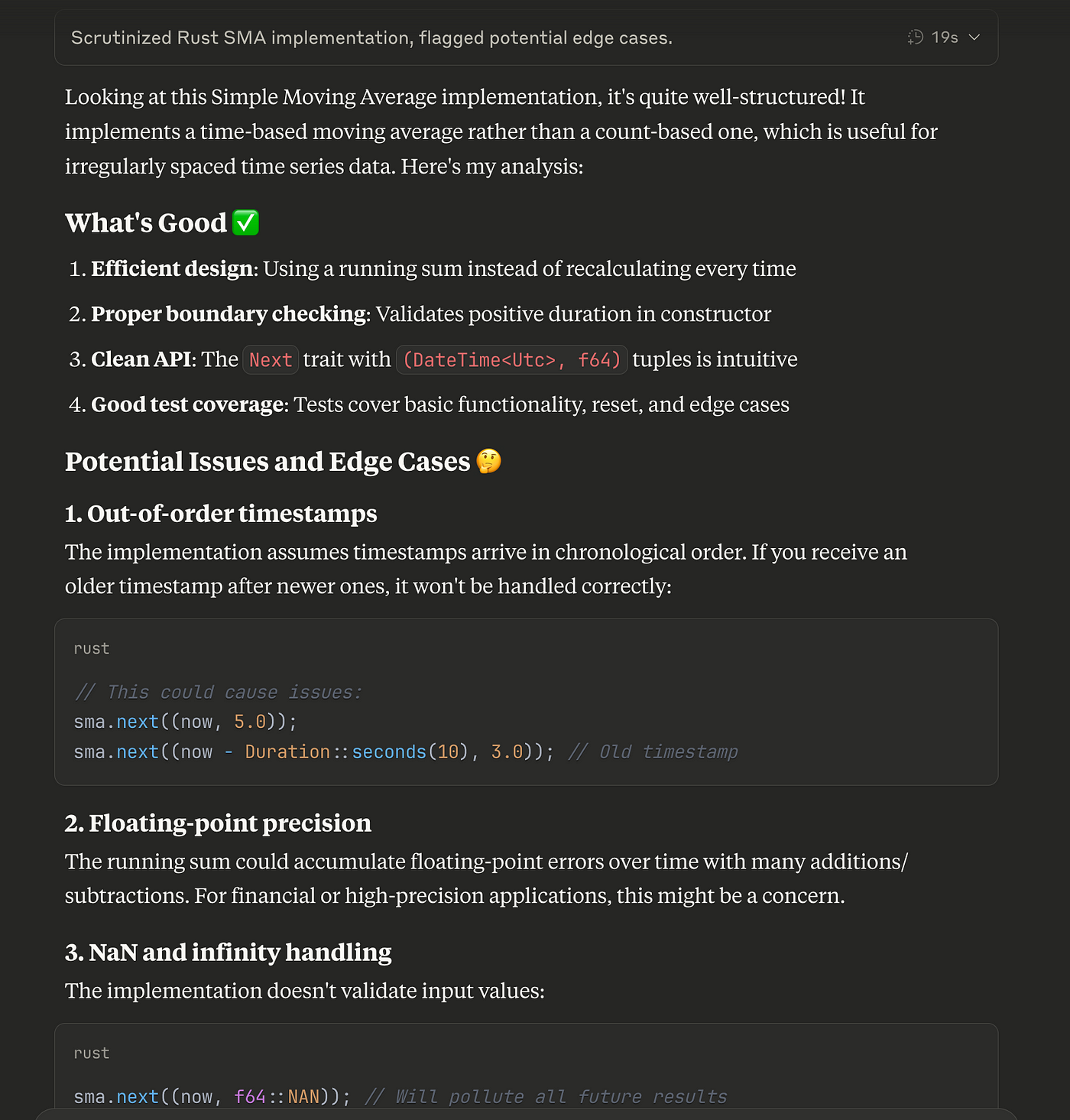
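For readers who prefer text to screenshots, here is a minimal sketch of a duration-based sliding-window SMA of the kind described above. It is not the NexusTrade code: the class name `DurationSMA`, the `update` method, and the sample prices are all hypothetical, chosen only to illustrate the idea of keeping every point that falls inside a trailing time window and averaging them equally.

```python
from collections import deque
from datetime import datetime, timedelta


class DurationSMA:
    """Equal-weight moving average over a trailing time window (hypothetical sketch)."""

    def __init__(self, window: timedelta):
        if window <= timedelta(0):
            raise ValueError("window must be positive")
        self.window = window
        self.points = deque()  # (timestamp, value) pairs, oldest first
        self.total = 0.0

    def update(self, timestamp: datetime, value: float) -> float:
        self.points.append((timestamp, value))
        self.total += value
        # Evict points at or before the cutoff, so the window is (cutoff, timestamp].
        cutoff = timestamp - self.window
        while self.points[0][0] <= cutoff:
            _, old_value = self.points.popleft()
            self.total -= old_value
        # Every surviving point counts the same, however much time it covers.
        return self.total / len(self.points)


# Made-up prices, one per day, with a 3-day window.
sma = DurationSMA(timedelta(days=3))
start = datetime(2025, 1, 1, 16, 0)
values = [
    sma.update(start + timedelta(days=i), price)
    for i, price in enumerate([10.0, 20.0, 30.0, 40.0])
]
print(values)  # [10.0, 15.0, 20.0, 30.0]
```

Nothing in this sketch cares whether the points arrive once a day or once a second, which is exactly why it looks fine at first glance.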

At the surface, the implementation looks correct. Even Claude Opus 4, the most powerful coding LLM of our time, only pointed out nitpicks and unrealistic edge cases.

However, solely because I implemented the technical indicator library, I knew that there existed a hidden weakness. Allow me to explain.

An Ass Out of You and Me: An Unchecked Assumption

On the surface level, the implementation of the intraday indicator looks sound. And it is!

If you make the following assumption: the data is ingested at regular intervals.

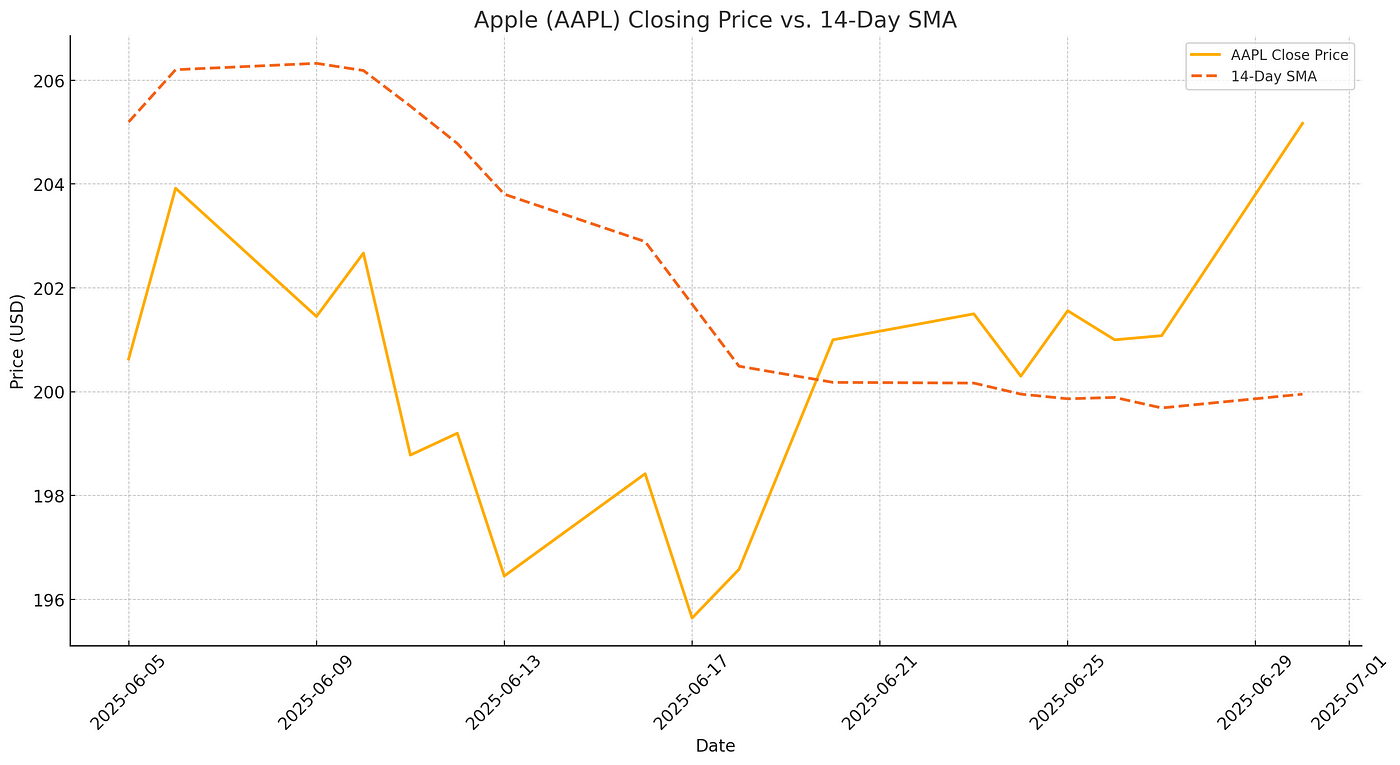

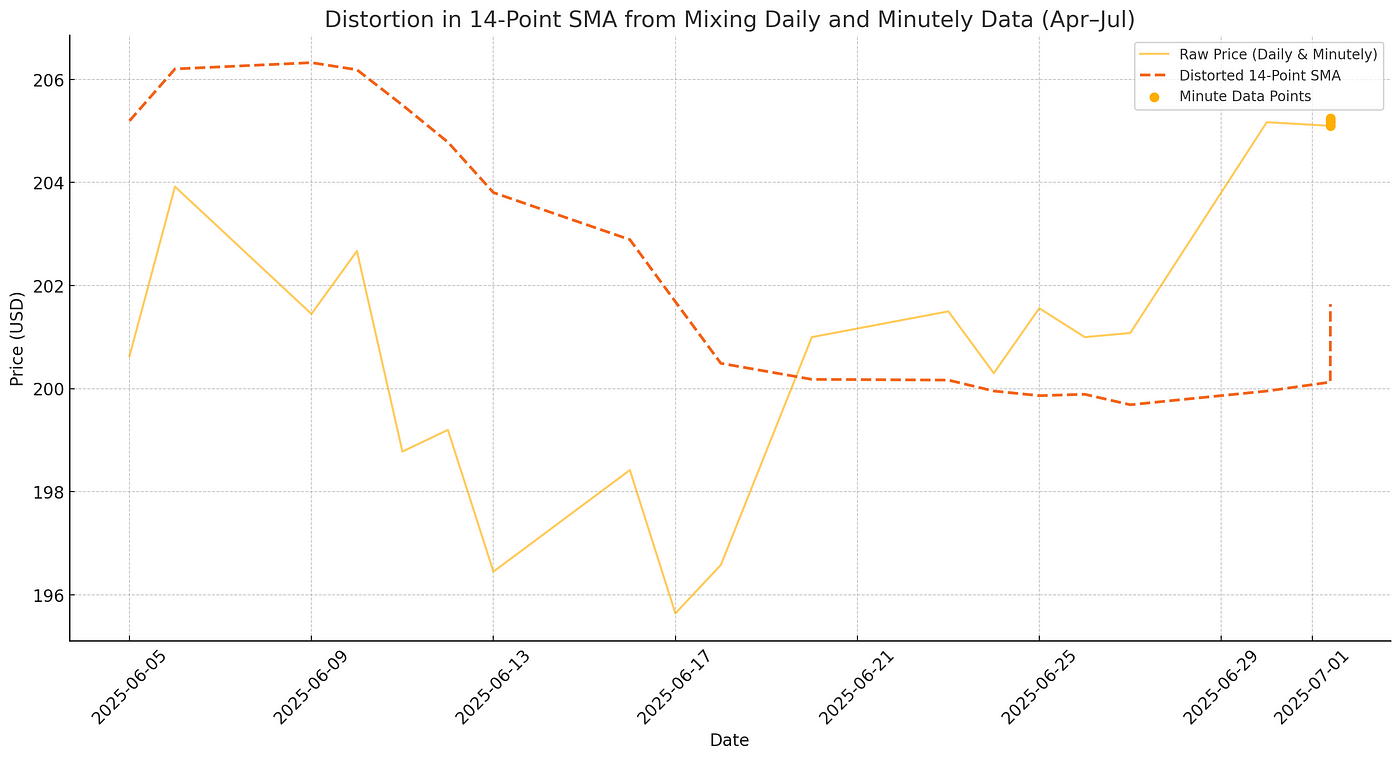

Take this graph for example. It will correctly compute the 14-day SMA across Apple’s closing price because we’re assuming one data point per day. But what happens if that assumption is violated?

Let’s say we “warmed up” our indicators using open/close data (i.e., computed our moving averages), and now we’re running our backtest on intraday data, which requires ingesting new data points at minute-level granularity.

If we use the current implementation of the indicator, that introduces a major bug.

This graph shows the impact of ingesting just 4 minutes of minutely data into our system. The SMA shoots up rapidly, approaching the current price of Apple.

This is not correct.

The current implementation assumes that each ingested data point should be weighted the same as every other datapoint in the window. This is wrong!
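To put rough numbers on the equal-weighting problem, here is a small arithmetic sketch. The prices are invented for illustration (they are not Apple’s actual prices): 14 daily closes at 100, followed by minute bars at 110 that all still fall inside the 14-day window.

```python
daily_closes = [100.0] * 14   # hypothetical warm-up data, one point per day
minute_bars = [110.0] * 4     # hypothetical intraday data, one point per minute

points = daily_closes + minute_bars
equal_weight_sma = sum(points) / len(points)

# How much of the average the minute bars control vs. how much time they cover.
share_of_points = len(minute_bars) / len(points)
share_of_time = len(minute_bars) / (14 * 24 * 60)  # minutes in a 14-day window

print(round(equal_weight_sma, 2))   # 102.22
print(round(share_of_points, 3))    # 0.222
print(round(share_of_time, 6))      # 0.000198

# After a full 390-minute trading session, the average is almost all intraday data.
full_session = daily_closes + [110.0] * 390
full_session_sma = sum(full_session) / len(full_session)
print(round(full_session_sma, 2))   # 109.65
```

Four minutes of data cover about 0.02% of the window but control about 22% of the average, and after one full session the “14-day” average is essentially the current price.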

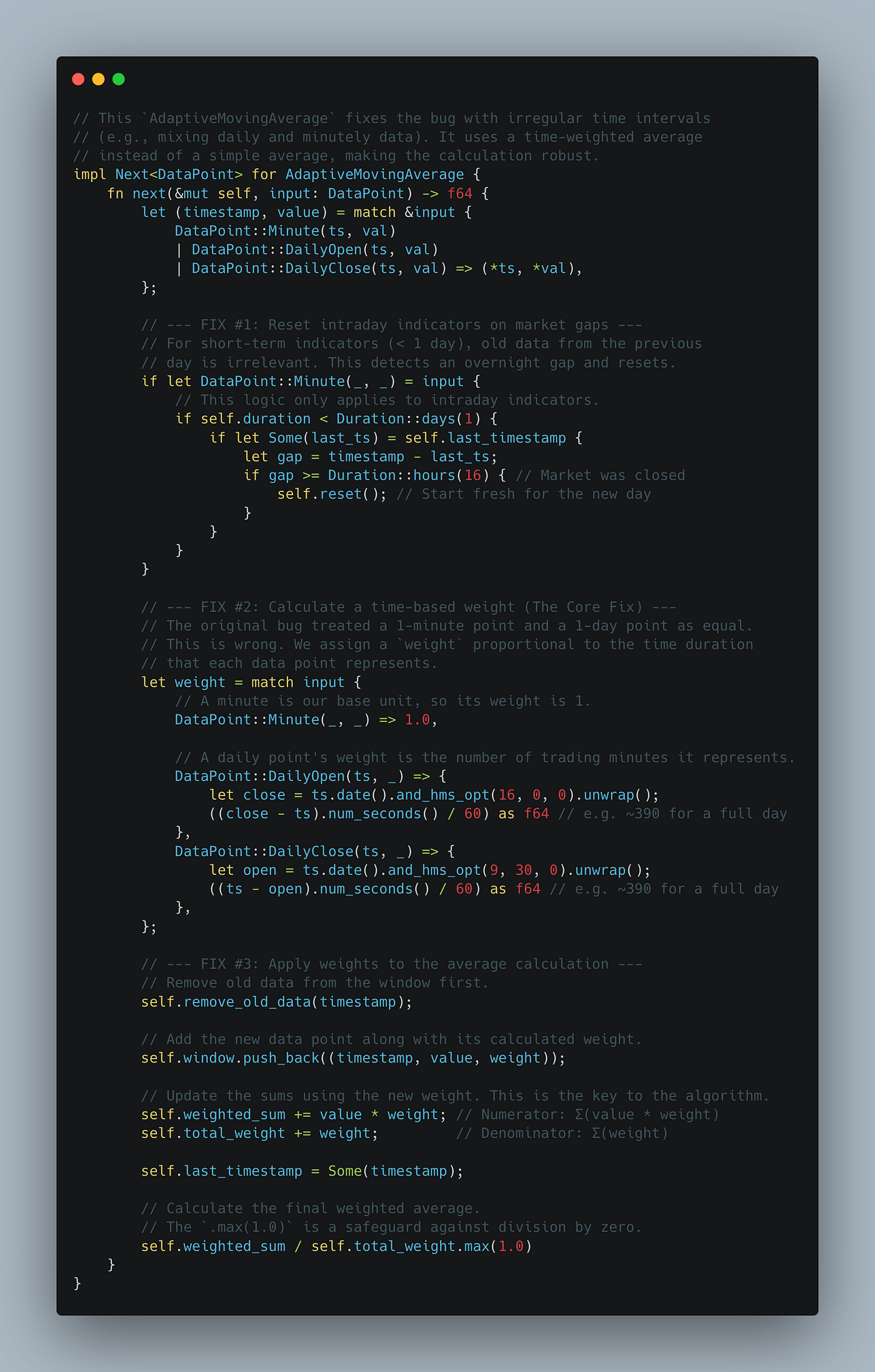

In reality, we need to implement a time-weighted moving average. After some immense brainpower, I ended up developing the following algorithm.

This new implementation is an improvement over the original because:

- It should reduce to the original implementation if we’re just ingesting open and close data

- It automatically resets the minutely averages at the start of the day for stocks

- It maintains the minutely averages for cryptocurrency, which is tradeable all day
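One way to sketch the general idea of time weighting is shown below. This is not the algorithm described above, just a simplified illustration under stated assumptions: the function name `time_weighted_average` is hypothetical, each price is assumed to stand for the interval since the previous point, the first point back-fills to the start of the window, and weights are based on calendar time only. It does not attempt the start-of-day reset for stocks or any market-hours handling.

```python
from datetime import datetime, timedelta


def time_weighted_average(points, window, now):
    """Average (timestamp, price) pairs over (now - window, now], weighted by time.

    Assumption: each price represents the interval since the previous point,
    and the first point back-fills to the start of the window.
    """
    window_start = now - window
    weighted_sum = 0.0
    total_seconds = 0.0
    previous = None
    for timestamp, price in sorted(points):
        if timestamp > now:
            break
        start = window_start if previous is None else max(previous, window_start)
        previous = timestamp
        if timestamp <= start:
            continue  # this interval lies entirely before the window
        seconds = (timestamp - start).total_seconds()
        weighted_sum += price * seconds
        total_seconds += seconds
    return weighted_sum / total_seconds if total_seconds else None


# Hypothetical data: 14 daily closes at 100, then 4 one-minute bars at 110.
close = datetime(2025, 1, 14, 16, 0)
window = timedelta(days=14)
daily = [(close - timedelta(days=13 - i), 100.0) for i in range(14)]
minutes = [(close + timedelta(minutes=m), 110.0) for m in range(1, 5)]

print(time_weighted_average(daily, window, close))  # 100.0
print(round(time_weighted_average(daily + minutes, window, minutes[-1][0]), 3))  # 100.002
```

With evenly spaced daily points and a full window, every point gets the same weight, so the result matches the plain mean. The four minute bars now nudge the average by about 0.002 instead of dragging it toward the latest price.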

Our unchecked assumption would’ve caused a major bug in such a critical feature. What can we learn from this?

If You Replace Knowledgeable Engineers with AI, Good Luck!

I’m sharing this story for really one reason: as a cautionary tale for tech executives and software engineers.

And this should go without saying, but I am not some anti-AI evangelist. I literally developed a no-code, AI-powered trading platform. If there’s anybody preaching sermons about the power and value of AI, it would be me!

But this article clearly demonstrates something immensely important: you can ask the literal best AI models of our time point blank if an implementation is wrong, and they will tell you no.

Now, this is not entirely the model’s fault. If I had prompted it in a way that listed every single assumption that can be made, maybe it would’ve caught it!

But that’s not how people use AI in the real world. I know it and you know it too.

For one, many assumptions that we make in software are implicit. We’re not even fully aware that we’re making them!

But also, just imagine if I hadn’t even written the technical indicator library, and had trusted the authors to handle this automatically. Or, imagine if AI had written the library entirely, and I never wondered about how it worked under the hood.

The implementation would’ve yielded outright incorrect values forever. Unless someone raised the issue because something seemed off, the bug would’ve lain dormant for months or even longer.

Debugging the issue would’ve been a nightmare on its own. I would’ve checked if the data was right or if the event emitter was firing correctly, and everything else within the core of the trading platform… I mean, why would I double-check the external libraries it depended on?

Catastrophically silent bugs like this are going to become rampant. Not only do AI tools dramatically increase the output of engineers, but they are also notoriously bad at understanding the larger picture.

Moreover, more and more non-technical folks are “vibe-coding” their projects into existence. They’re developing software based on intuition, requirements, and AI prompts, and don’t have a deep understanding of the actual code that’s being generated.

I’ve seen it first-hand, on LinkedIn, Reddit, and even TikTok! Just Google “vibe-coding” and see how popular it has become.

What happens when a “vibe-coded” library is used by thousands of developers, and these issues start infesting all of our software? If I nearly missed a critical bug and I actually wrote the code, how many bugs will exist because code wasn’t written by engineers with domain expertise?

I shudder to think of that future.

So if you’re a tech executive, don’t fire your engineering team yet. They may be more critical now than ever before.

Concluding Thoughts

Maybe my brain is overreacting.

Maybe I would’ve caught this issue well before I launched. I’m just having trouble figuring out how.

In this case, I knew of the limitation because I wrote the library. But there are hundreds of libraries now being created and reviewed purely by AI. Engineers are looking at less and less of the code that is brought into the world.

And this should terrify you.

For backtesting software, the consequences of this bug would’ve been an improper test. Users would be annoyed and leave bad reviews. I would suffer reputational harm. But I would survive.

But imagine such a bug for other, mission-critical software, like rocket ships and self-driving cars.

This article demonstrates why human beings still need to be in the loop when developing complex software systems. It is imperative that human beings sanity-check LLM-generated code with domain-aware unit tests. Even the best AI models don’t fully grasp exactly what we’re building.
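As a rough sketch of what a domain-aware check could look like here, the snippet below encodes one piece of domain knowledge: a few minutes of data should barely move a 14-day average. All names, prices, and the 1% threshold are hypothetical choices for illustration, and `equal_weight_average` is a stand-in for the unchecked assumption rather than any real library’s code.

```python
from datetime import datetime, timedelta


def equal_weight_average(points, window, now):
    """Stand-in for the unchecked assumption: every point in the window counts the same."""
    recent = [price for timestamp, price in points if now - window < timestamp <= now]
    return sum(recent) / len(recent)


def intraday_bars_barely_move_average(average_fn):
    """Four 1-minute bars cover about 0.02% of a 14-day window, so a 10% jump
    in those bars should move the 14-day average by well under 1%."""
    close = datetime(2025, 1, 14, 16, 0)
    window = timedelta(days=14)
    daily = [(close - timedelta(days=13 - i), 100.0) for i in range(14)]
    minutes = [(close + timedelta(minutes=m), 110.0) for m in range(1, 5)]
    before = average_fn(daily, window, close)
    after = average_fn(daily + minutes, window, minutes[-1][0])
    return abs(after - before) / before < 0.01


result = intraday_bars_barely_move_average(equal_weight_average)
print(result)  # False: the equal-weight average jumps by about 2.2%
```

A check like this fails on the equal-weight version without anyone having to spot the bug by reading the code, which is the point: the test carries the assumption that the reviewer, human or AI, might miss.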

So before you ship that feature (whose code you barely glanced at), ask yourself this question: what assumption did you and Gemini miss?

Thank you for reading! If you’re interested in how you can use AI to create profitable investment strategies, check out NexusTrade for free today!
